PlAwAnSaI

Administrator

- 'docker ps -a' shows the status of all containers, including stopped ones.

- A status of Up means the container is running.

- To kill a container, use 'docker kill [NAMES]'.
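The status check can also be scripted. The following is a minimal sketch that assumes the Docker CLI is installed and on the PATH; the helper names `parse_ps` and `list_containers` are made up for illustration. It asks 'docker ps -a' for only the NAMES and STATUS columns and treats a status starting with Up as running.

```python
import subprocess


def parse_ps(output: str) -> dict[str, bool]:
    """Map container name -> True if its STATUS column starts with 'Up'."""
    containers = {}
    for line in output.splitlines():
        if not line.strip():
            continue
        # Each line is "<name><TAB><status>", as requested by --format below.
        name, _, status = line.partition("\t")
        containers[name] = status.startswith("Up")
    return containers


def list_containers() -> dict[str, bool]:
    """Run 'docker ps -a' and report which containers are running."""
    result = subprocess.run(
        ["docker", "ps", "-a", "--format", "{{.Names}}\t{{.Status}}"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_ps(result.stdout)
```

Calling `list_containers()` on a Docker host returns a dictionary of container names to running flags; `parse_ps` can be exercised on its own with captured output.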

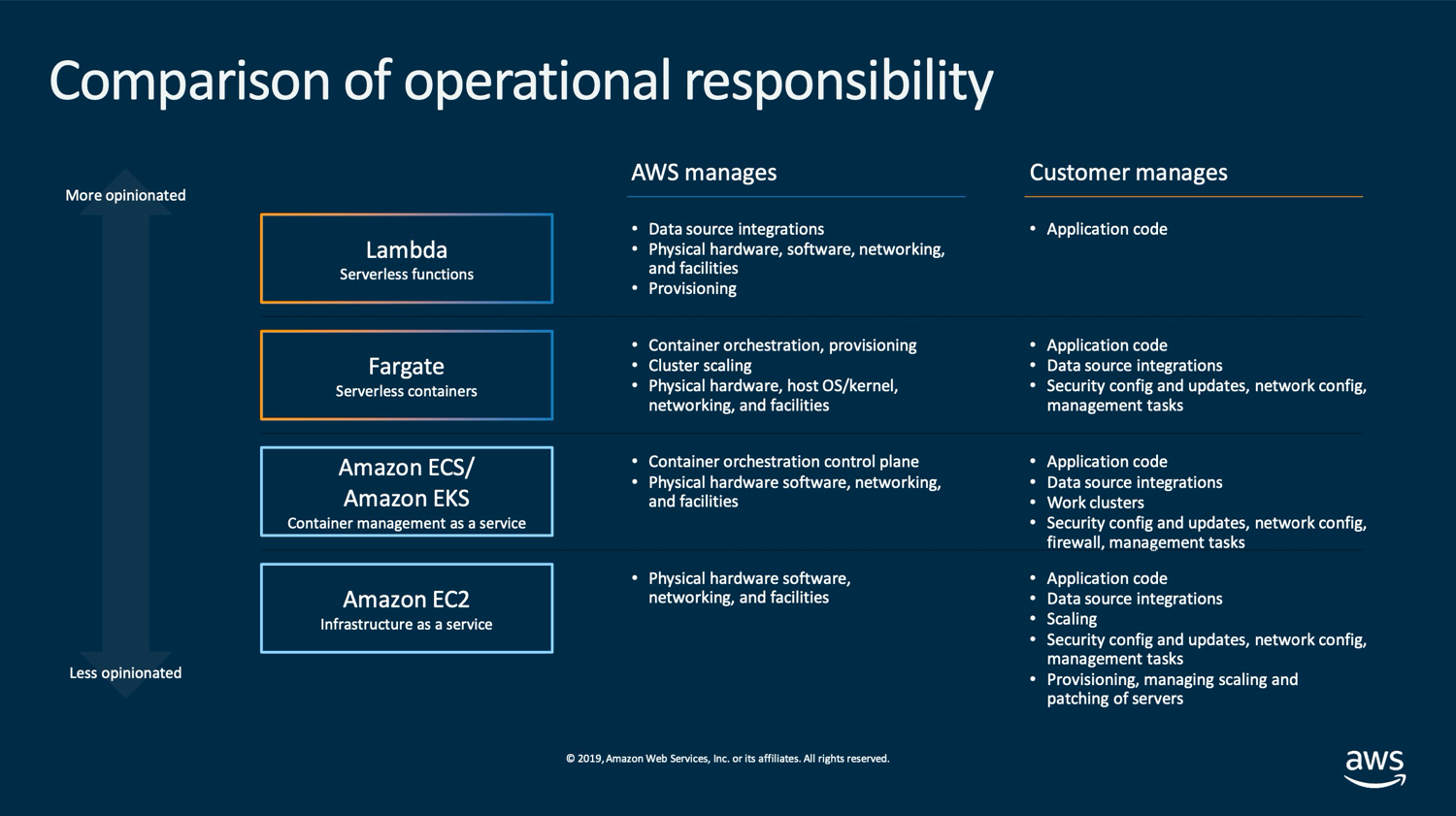

- Task roles are the best-practice way of providing permissions to running containers on ECS.

- Use ECS in Fargate mode if you want as little admin overhead as possible.

- ECS Service is used to configure scaling and HA for containers.

- 3 cluster modes are available within ECS:

- Network Only (Fargate)

- EC2 Linux + Networking

- EC2 Windows + Networking

- Docker is the only container platform supported by Amazon ECS at this time.

- Container images are stored in a container registry.

- The advantages of containers are that they are fast to start up, portable, and lightweight.

Code:

https://learn-cantrill-labs.s3.amazonaws.com/awscoursedemos/0010-aws-associate-ec2-bootstrapping-with-userdata/A4L_VPC.yaml

Code:

https://learn-cantrill-labs.s3.amazonaws.com/awscoursedemos/0010-aws-associate-ec2-bootstrapping-with-userdata/userdata.txt

Code:

https://learn-cantrill-labs.s3.amazonaws.com/awscoursedemos/0010-aws-associate-ec2-bootstrapping-with-userdata/A4L_VPC_PUBLICINSTANCE.yaml

Code:

https://learn-cantrill-labs.s3.amazonaws.com/awscoursedemos/0011-aws-associate-ec2-instance-role/A4L_VPC_PUBLICINSTANCE_ROLEDEMO.yaml

Code:

https://learn-cantrill-labs.s3.amazonaws.com/awscoursedemos/0011-aws-associate-ec2-instance-role/lesson_commands.txt

- A dev has been asked to build a real-time dashboard web application to visualize the key prefixes and storage size of objects in Amazon S3 buckets. Amazon DynamoDB will be used to store the Amazon S3 metadata. The optimal and MOST cost-effective design to keep the real-time dashboard up to date with the state of the objects in the Amazon S3 buckets is to use an Amazon S3 event notification backed by an AWS Lambda function that persists the metadata into DynamoDB, and have the web application poll the DynamoDB table to reflect each change. Listing every object on a schedule is neither real time nor cheap for large buckets.
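A minimal sketch of the event-driven variant, in which an S3 event notification invokes a Lambda function that writes object metadata to DynamoDB. The attribute names (`bucket`, `key`, `prefix`, `size`) and the table layout are assumptions made for illustration, and only object-created events are handled. The `table` argument is expected to behave like a boto3 DynamoDB `Table` resource.

```python
from urllib.parse import unquote_plus


def to_items(event: dict) -> list[dict]:
    """Turn an S3 event notification payload into metadata items."""
    items = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(s3["object"]["key"])
        prefix = key.rsplit("/", 1)[0] + "/" if "/" in key else ""
        items.append(
            {
                "bucket": s3["bucket"]["name"],
                "key": key,
                "prefix": prefix,
                "size": s3["object"].get("size", 0),
            }
        )
    return items


def persist(table, event: dict) -> int:
    """Write one item per event record; returns the number written."""
    items = to_items(event)
    for item in items:
        table.put_item(Item=item)
    return len(items)


# In a Lambda function the wiring might look like this (table name is hypothetical):
#   table = boto3.resource("dynamodb").Table("S3Metadata")
#   def handler(event, context):
#       return persist(table, event)
```

The dashboard then reads prefixes and sizes from the table instead of listing the bucket.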

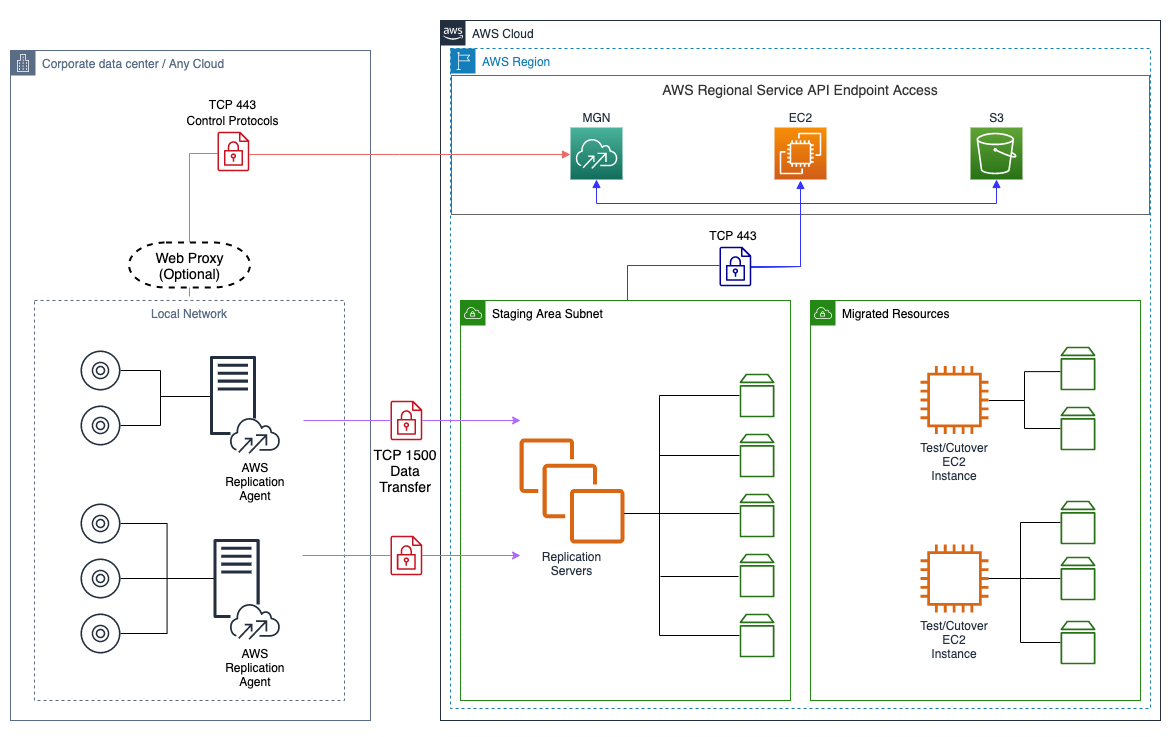

- An on-premises application is implemented using a Linux, Apache, MySQL, and PHP (LAMP) stack. The dev wants to run this application in AWS. Amazon EC2 and Amazon Aurora can be used to run this stack.

- A dev is building a backend system for the long-term storage of information from an inventory management system. The information needs to be stored so that other teams can build tools to report on and analyze the data. To achieve the FASTEST running time, the dev should create an AWS Lambda function that writes to Amazon S3 synchronously and set the inventory system to retry failed requests.

- A dev is working on an application that handles 10 MB documents that contain highly sensitive data. The application will use AWS KMS to perform client-side encryption. The dev should invoke the GenerateDataKey API to retrieve the plaintext version of the data encryption key and use it to encrypt the data.

GenerateDataKey API: Generates a unique data key. The operation returns a plaintext copy of the data key and a copy that is encrypted under the KMS key (formerly called a Customer Master Key, CMK) that you specify. Use the plaintext key to encrypt data outside of KMS, and store the encrypted data key with the encrypted data.
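The envelope-encryption flow described above can be sketched as follows. This is an illustration under assumptions, not a complete implementation: `kms` is expected to behave like a boto3 KMS client, and `encrypt` is a hypothetical callable (for example an AES-GCM routine from a cryptography library) that takes the plaintext key and the data and returns ciphertext.

```python
from typing import Callable


def envelope_encrypt(
    kms,
    key_id: str,
    document: bytes,
    encrypt: Callable[[bytes, bytes], bytes],
) -> dict:
    """Encrypt a document locally with a data key issued by KMS."""
    # GenerateDataKey returns the same data key twice: once in plaintext and
    # once encrypted under the KMS key identified by key_id.
    response = kms.generate_data_key(KeyId=key_id, KeySpec="AES_256")
    ciphertext = encrypt(response["Plaintext"], document)
    # Keep only the encrypted data key next to the ciphertext; the plaintext
    # key should not be stored.
    return {
        "ciphertext": ciphertext,
        "encrypted_key": response["CiphertextBlob"],
    }
```

To decrypt later, the stored encrypted key is passed to the KMS Decrypt API to recover the plaintext data key.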

- A dev is using AWS CodeDeploy to deploy an application running on Amazon EC2. The dev wants to change the file permissions for a specific deployment file. To meet this requirement, the dev should use the AfterInstall lifecycle event.

- An application ingests a large number of small messages and stores them in a database. The application uses AWS Lambda. A dev team is making changes to the application's processing logic. In testing, it is taking more than 15 minutes to process each message, which exceeds Lambda's maximum timeout, so the team is concerned the current backend may time out. To ensure each message is processed in the MOST scalable way, the team should add the messages to an Amazon SQS queue and set up an Amazon EC2 instance to poll the queue and process messages as they arrive.

- A dev has discovered that an application responsible for processing messages in an Amazon SQS queue is routinely falling behind. The application is capable of processing multiple messages in one execution, but is only receiving one message at a time. To increase the number of messages the application receives, the dev should call the ReceiveMessage API and set MaxNumberOfMessages to a value greater than the default of 1 (the maximum is 10).
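MaxNumberOfMessages is a parameter of the SQS ReceiveMessage call. A minimal sketch, assuming `sqs` behaves like a boto3 SQS client; the helper name `receive_batch` is made up for illustration.

```python
def receive_batch(sqs, queue_url: str, batch_size: int = 10) -> list[dict]:
    """Fetch up to batch_size messages in a single ReceiveMessage call."""
    # SQS accepts values from 1 to 10; the default when omitted is 1.
    if not 1 <= batch_size <= 10:
        raise ValueError("batch_size must be between 1 and 10")
    response = sqs.receive_message(
        QueueUrl=queue_url,
        MaxNumberOfMessages=batch_size,
    )
    # The "Messages" key is absent when the queue returned nothing.
    return response.get("Messages", [])
```

A call may still return fewer messages than requested, so the consumer should loop over whatever comes back.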

- A dev is writing an AWS Lambda function. The dev wants to log key events that occur during the Lambda function and include a unique identifier to associate the events with a specific function invocation. To accomplish this, the dev should obtain the request identifier from the Lambda context object and architect the application to write logs to the console.
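A minimal sketch of that logging pattern for a Python Lambda function. The JSON log shape and the `log_event` helper are assumptions for illustration; `context.aws_request_id` is the request identifier the Python runtime exposes, and anything printed to the console ends up in the function's CloudWatch Logs stream.

```python
import json


def log_event(request_id: str, message: str, **fields) -> None:
    """Write one structured log line to the console (stdout)."""
    print(json.dumps({"requestId": request_id, "message": message, **fields}))


def handler(event, context):
    # The same identifier is attached to every log line of this invocation.
    request_id = context.aws_request_id
    log_event(request_id, "invocation started")
    # ... application logic would go here ...
    log_event(request_id, "invocation finished")
    return {"requestId": request_id}
```

Filtering the logs by `requestId` then groups all events that belong to one invocation.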

- A dev is trying to monitor an application's status by running a cron job that returns 1 if the service is up and 0 if the service is down. The dev created code that uses the AWS CLI put-metric-alarm command to publish the custom metrics to Amazon CloudWatch and create an alarm. However, the dev is unable to create an alarm because the custom metrics do not appear in the CloudWatch console. The cause is that put-metric-alarm only creates or updates an alarm; the dev needs to use the put-metric-data command to publish the metric values.
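The SDK equivalent of put-metric-data can be sketched as below. It assumes `cloudwatch` behaves like a boto3 CloudWatch client; the namespace `Custom/ServiceHealth` and metric name `ServiceUp` are hypothetical.

```python
def publish_status(
    cloudwatch,
    service_up: bool,
    namespace: str = "Custom/ServiceHealth",
    metric_name: str = "ServiceUp",
) -> float:
    """Publish 1 when the service is up and 0 when it is down."""
    value = 1.0 if service_up else 0.0
    cloudwatch.put_metric_data(
        Namespace=namespace,
        MetricData=[{"MetricName": metric_name, "Value": value}],
    )
    return value
```

Once data points exist for the metric, an alarm can be created on it with put-metric-alarm.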

- A company runs an e-commerce website that uses Amazon DynamoDB, where pricing for items is dynamically updated in real time. At any given time, multiple updates may occur simultaneously for pricing information on a particular product. This is causing the original editor's changes to be overwritten without a proper review process. To prevent this overwriting, use DynamoDB conditional writes.
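One common way to apply conditional writes is optimistic locking with a version attribute. The sketch below assumes `dynamodb` behaves like a boto3 DynamoDB client; the table layout (`ProductId` key, `Price` and `Version` attributes) is hypothetical.

```python
def update_price(
    dynamodb,
    table_name: str,
    product_id: str,
    new_price: str,
    expected_version: int,
) -> bool:
    """Update the price only if nobody else changed the item in the meantime."""
    try:
        dynamodb.update_item(
            TableName=table_name,
            Key={"ProductId": {"S": product_id}},
            UpdateExpression="SET #price = :price, #version = :next",
            # The write is rejected unless the stored version still matches.
            ConditionExpression="#version = :expected",
            ExpressionAttributeNames={"#price": "Price", "#version": "Version"},
            ExpressionAttributeValues={
                ":price": {"N": new_price},
                ":next": {"N": str(expected_version + 1)},
                ":expected": {"N": str(expected_version)},
            },
        )
    except dynamodb.exceptions.ConditionalCheckFailedException:
        # Another editor updated the item first; the caller should re-read it.
        return False
    return True
```

A `False` result tells the caller to fetch the latest item and review it before retrying, instead of silently overwriting the other change.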

- Company C provides an online image recognition service and uses SQS to decouple system components for scalability. The SQS consumers poll the imaging queue as often as possible to keep end-to-end throughput as high as possible. However, Company C is realizing that polling in tight loops is burning CPU cycles and increasing costs with empty responses. Company C can reduce the number of empty responses by setting the imaging queue's ReceiveMessageWaitTimeSeconds attribute to 20 seconds (long polling).
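A minimal sketch of enabling long polling on a queue, assuming `sqs` behaves like a boto3 SQS client; the helper name is made up for illustration.

```python
def enable_long_polling(sqs, queue_url: str, wait_seconds: int = 20) -> None:
    """Make ReceiveMessage calls wait for messages instead of returning empty."""
    # SQS allows 0 (short polling) up to 20 seconds.
    if not 0 <= wait_seconds <= 20:
        raise ValueError("wait_seconds must be between 0 and 20")
    sqs.set_queue_attributes(
        QueueUrl=queue_url,
        # Queue attribute values are passed as strings.
        Attributes={"ReceiveMessageWaitTimeSeconds": str(wait_seconds)},
    )
```

The same effect can be requested per call by passing `WaitTimeSeconds` to ReceiveMessage.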

- A dev has created a REST API using Amazon API Gateway. The dev wants to log who accesses the API and how each caller accesses it. The dev also wants to control how long the logs are kept. To meet these requirements, the dev should enable API Gateway access logging and delete old logs using Amazon CloudWatch Logs retention settings.

- Company D is currently hosting its corporate site in an Amazon S3 bucket with Static Website Hosting enabled. Currently, when visitors go to http://www.companyd.com the index.html page is returned. Company D would now like a new page, welcome.html, to be returned when a visitor enters http://www.companyd.com in the browser. The steps that allow Company D to meet this requirement are:

- Upload an HTML page named welcome.html to the S3 bucket.

- Set the Index Document property to welcome.html.
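The second step can be sketched with the SDK as follows, assuming `s3` behaves like a boto3 S3 client and that website hosting is already enabled on the bucket. Because PutBucketWebsite replaces the whole website configuration, the sketch copies any existing error document and routing rules before changing the index document.

```python
def set_index_document(s3, bucket: str, index_document: str) -> dict:
    """Point the bucket's static website at a new index document."""
    current = s3.get_bucket_website(Bucket=bucket)
    # Carry over settings that should survive the update.
    config = {
        name: current[name]
        for name in ("ErrorDocument", "RoutingRules")
        if name in current
    }
    config["IndexDocument"] = {"Suffix": index_document}
    s3.put_bucket_website(Bucket=bucket, WebsiteConfiguration=config)
    return config
```

For the scenario above the call would be `set_index_document(s3, bucket_name, "welcome.html")`, where `bucket_name` is the bucket that hosts the site.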

- A company is launching an e-commerce website and will host the static data in Amazon S3. The company expects approximately 1,000 Transactions Per Second (TPS) for GET and PUT requests in total. Logging must be enabled to track all requests and must be retained for auditing purposes. The MOST cost-effective solution is to enable AWS CloudTrail logging for the S3 bucket-level actions and create a lifecycle policy to expire the data in 90 days.

[Images: CloudFormation learning-aid diagrams covering logical and physical resources, template parameters, pseudo parameters, intrinsic functions, mappings, outputs, conditions, DependsOn, CreationPolicy, WaitCondition, nested stacks, cross-stack references, StackSets, DeletionPolicy, stack roles, cfn-init, cfn-hup, change sets, and custom resources.]