PlAwAnSaI

Administrator

- An instance running a web server is deployed in a subnet of a VPC. Connecting to the instance from a browser over HTTP via the internet results in a connection timeout. Things to check:

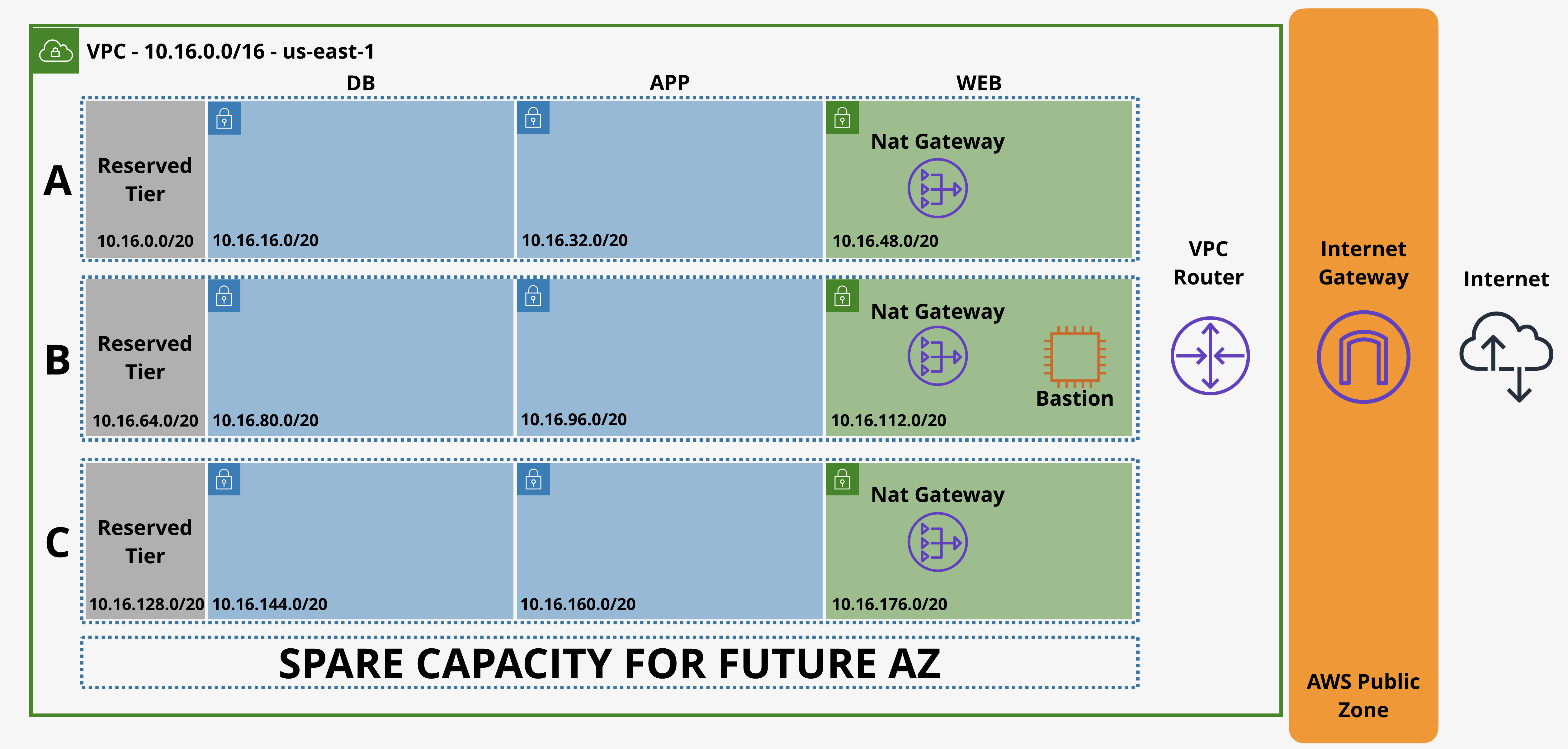

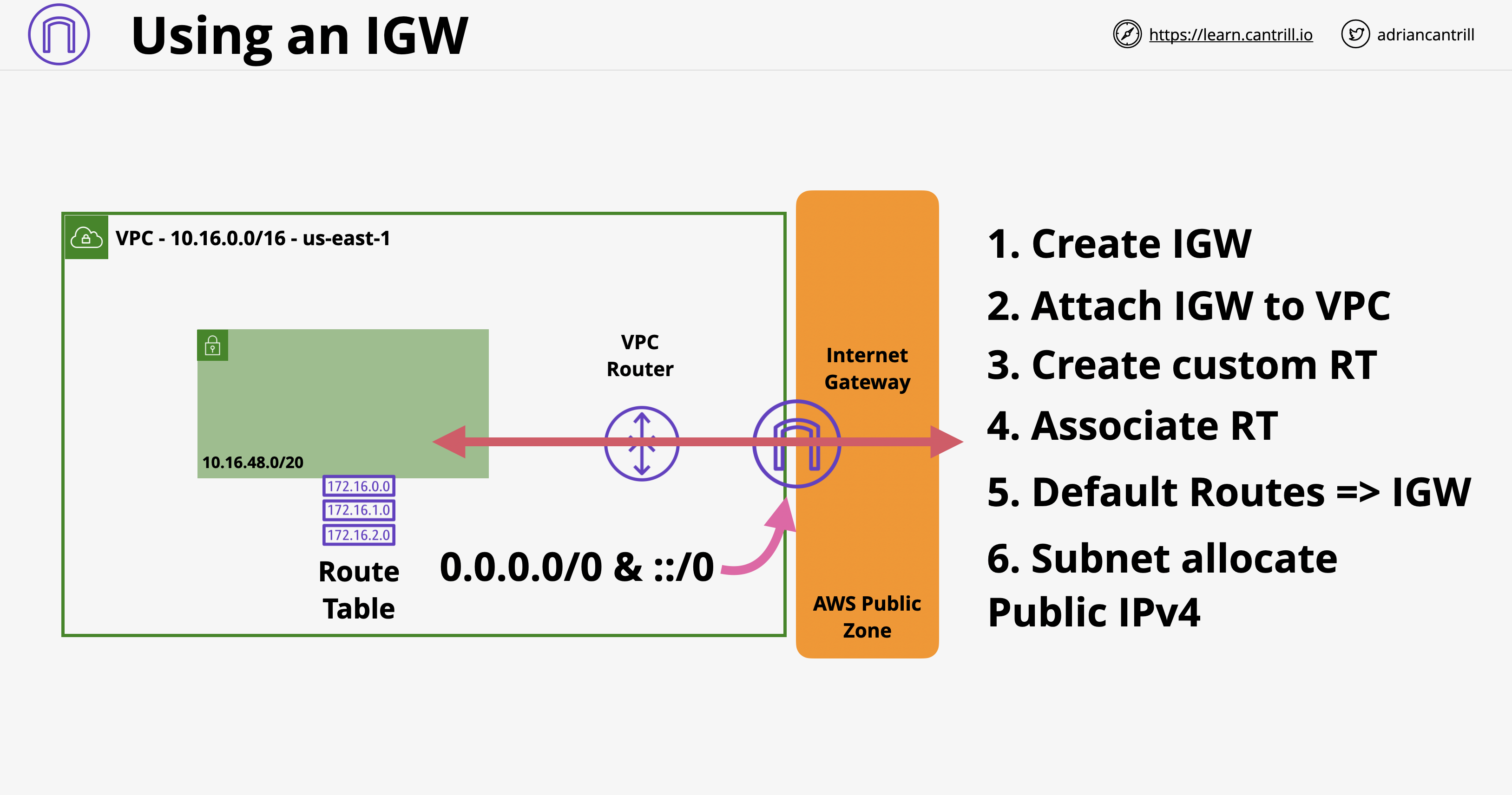

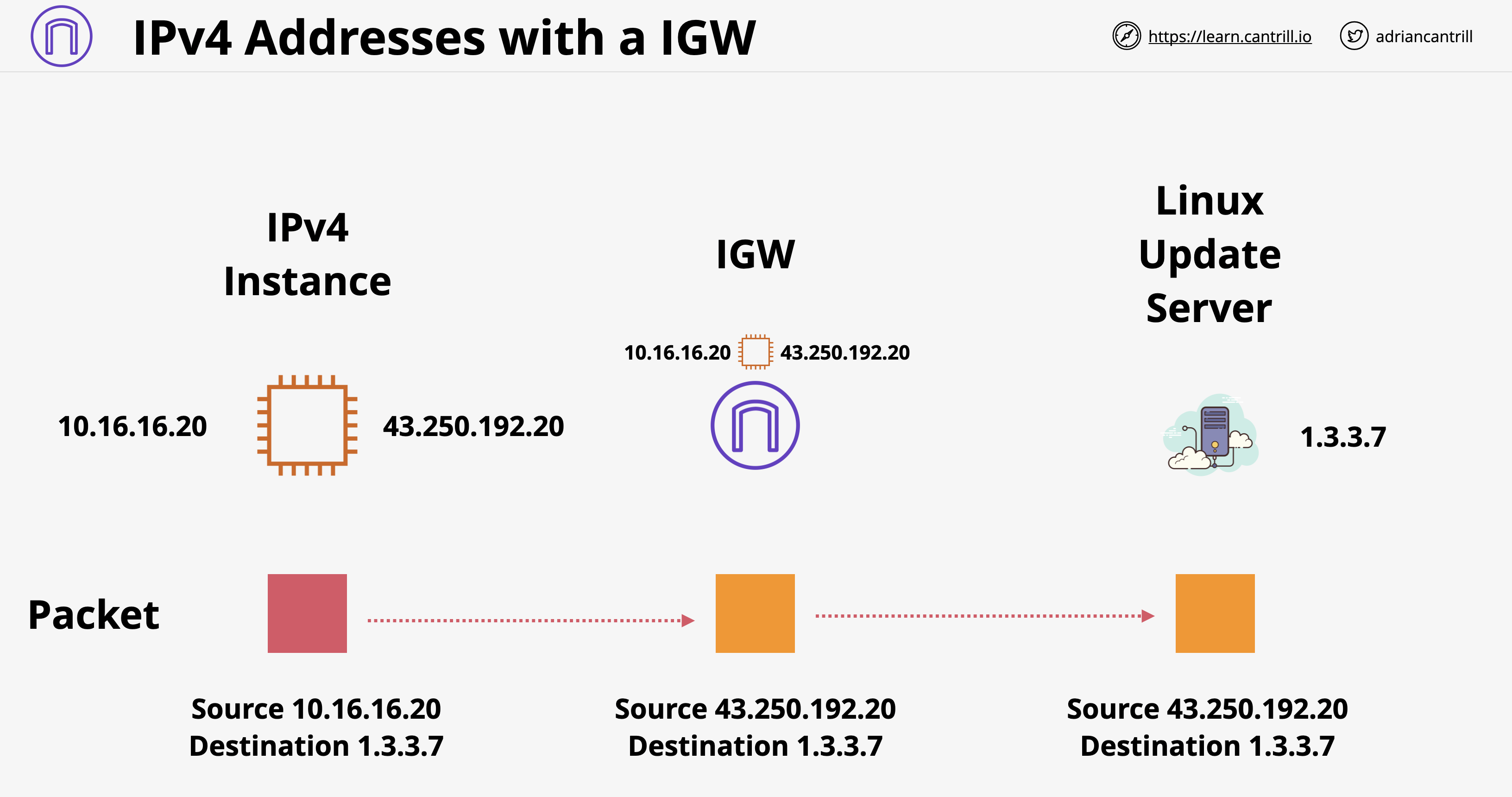

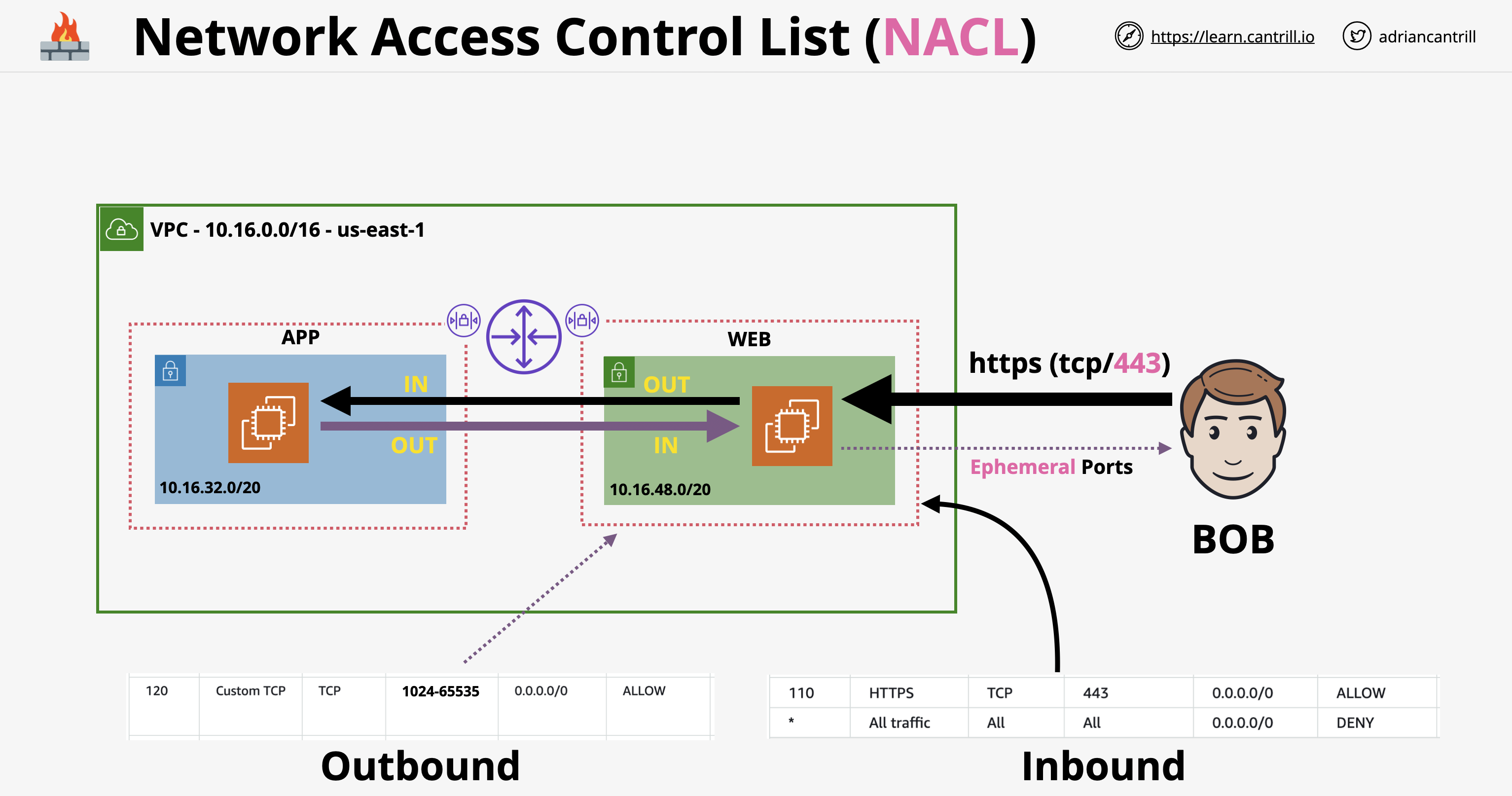

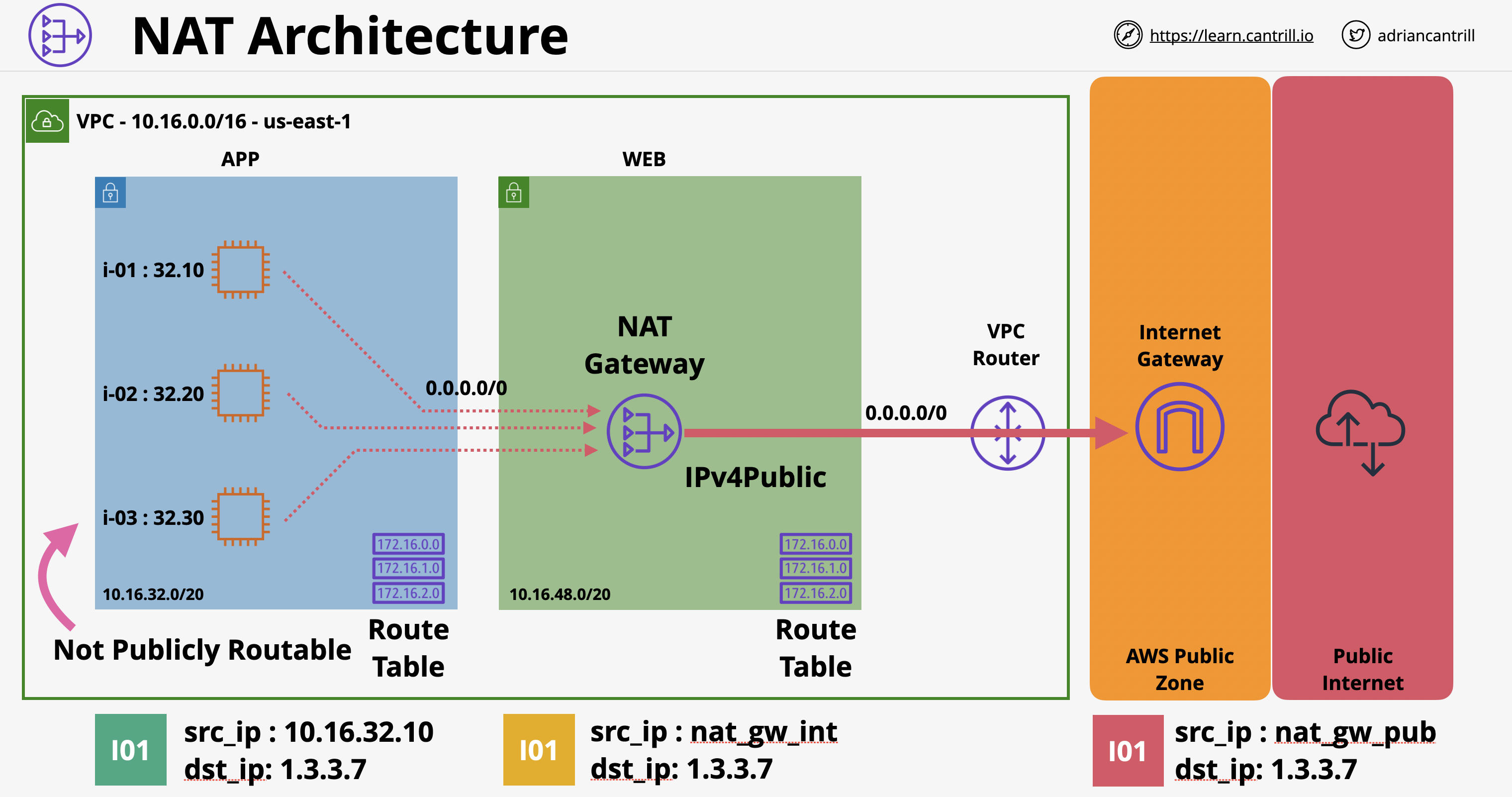

- Check that the VPC has an internet gateway and that the default route 0.0.0.0/0 points to the internet gateway

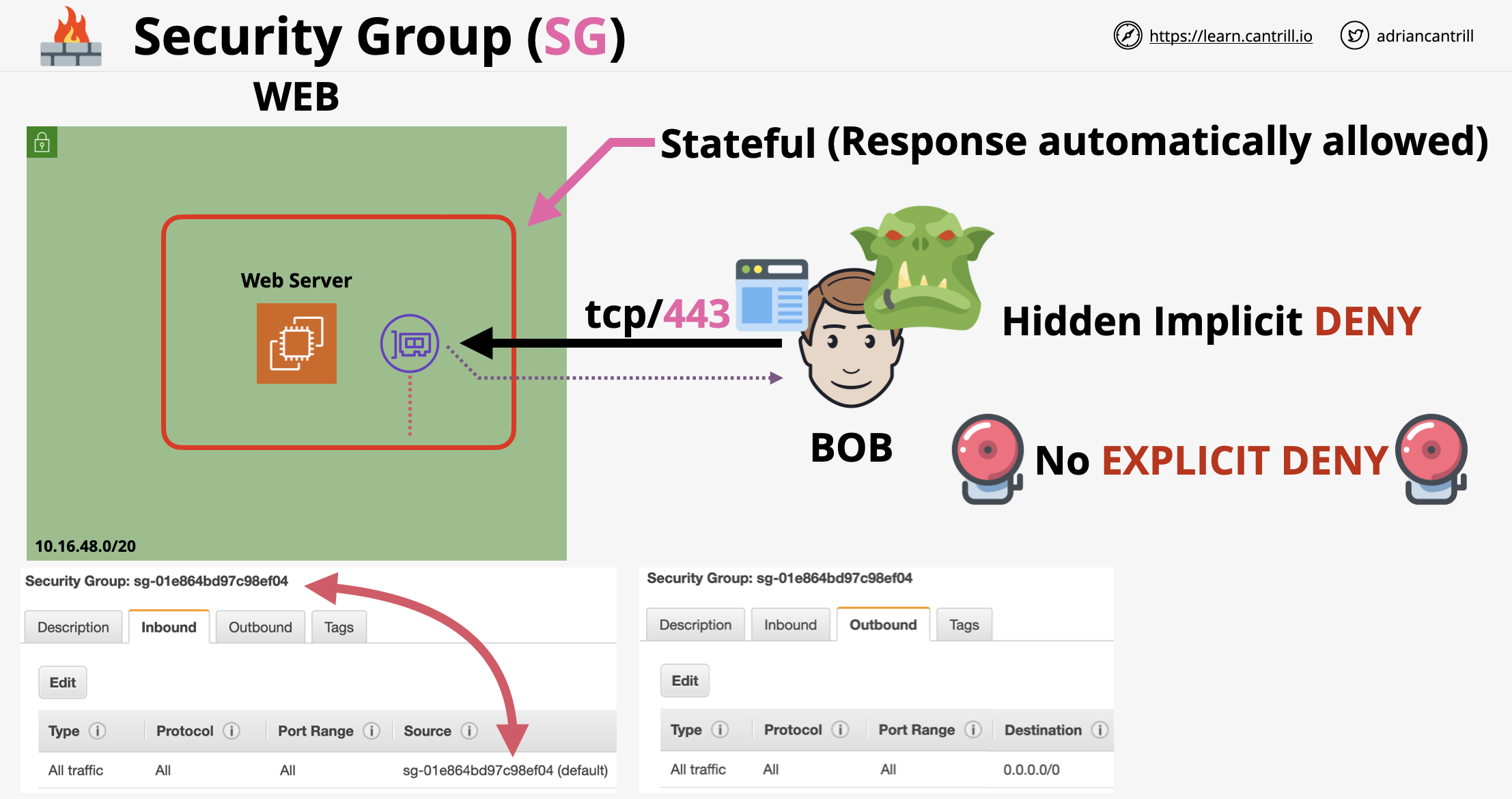

- Check that the security group allows inbound access on port 80

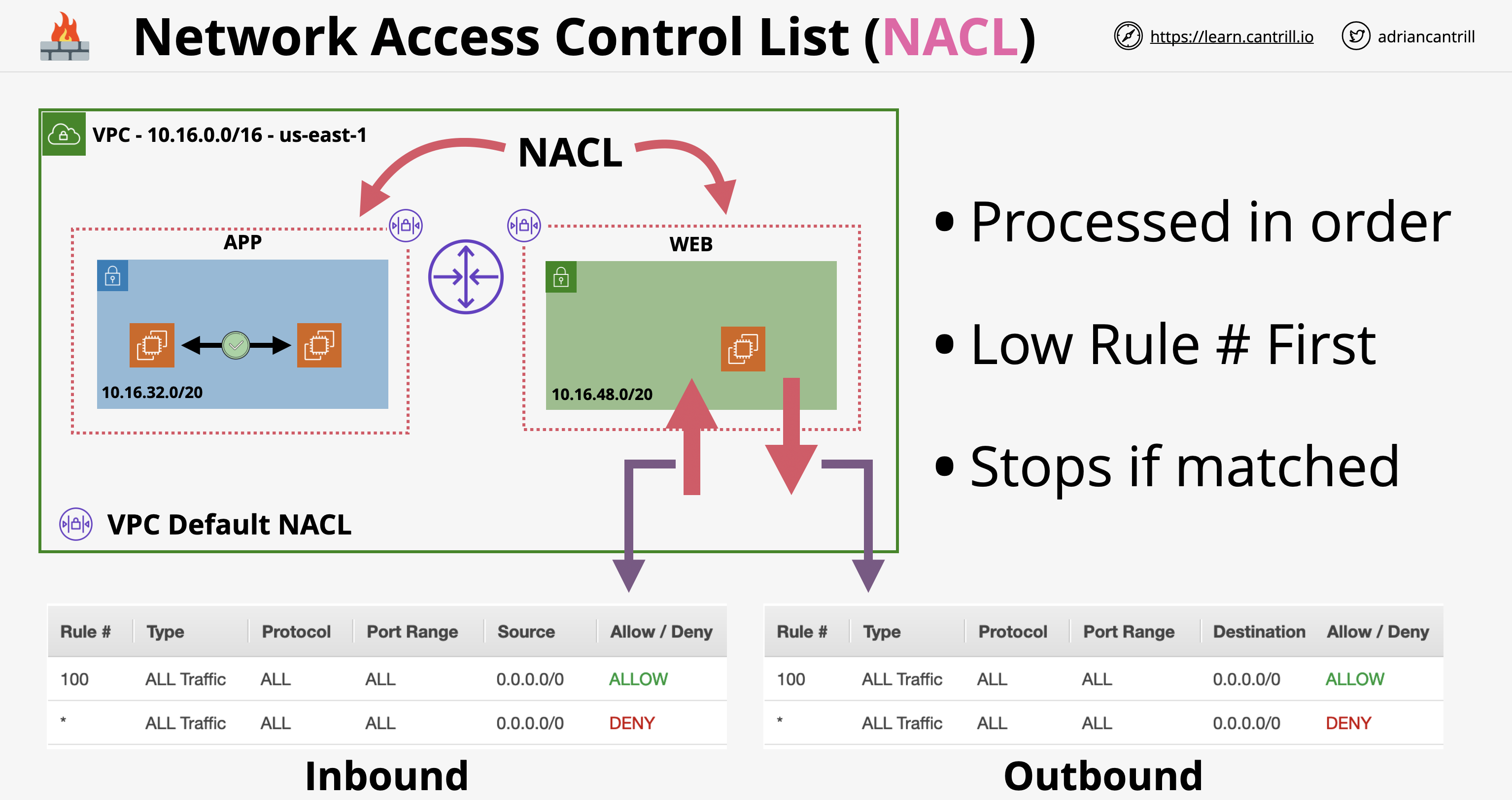

- Check that the network ACL allows inbound access on port 80
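The three checks above can be sketched as a small offline checklist. This is a simplified model, not an AWS API: the `Rule` class, the `routes` mapping, and the `diagnose` function are hypothetical names, and the model only looks at allow rules (it ignores network ACL rule ordering, deny rules, and outbound rules).

```python
from dataclasses import dataclass
from ipaddress import ip_network


@dataclass
class Rule:
    """A simplified inbound allow rule (hypothetical model, not an AWS type)."""
    protocol: str
    from_port: int
    to_port: int
    cidr: str


def allows_http(rules, source_cidr="0.0.0.0/0", port=80):
    """Return True if some rule allows TCP `port` from all of `source_cidr`."""
    src = ip_network(source_cidr)
    for rule in rules:
        covers_port = rule.from_port <= port <= rule.to_port
        covers_src = src.subnet_of(ip_network(rule.cidr))
        if rule.protocol == "tcp" and covers_port and covers_src:
            return True
    return False


def diagnose(routes, sg_rules, nacl_rules):
    """Run the three checks in order and list what is missing."""
    problems = []
    # `routes` maps a destination CIDR to a target ID such as "igw-..." or "local".
    if not routes.get("0.0.0.0/0", "").startswith("igw-"):
        problems.append("no default route to an internet gateway")
    if not allows_http(sg_rules):
        problems.append("security group blocks inbound port 80")
    if not allows_http(nacl_rules):
        problems.append("network ACL blocks inbound port 80")
    return problems
```

An empty list from `diagnose` means all three checks passed in this simplified model; the real configuration would still need to be confirmed in the console or with the AWS CLI.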

- Examples of actions that can be controlled with an IAM policy:

- Configuring a VPC security group

- Creating an RDS for Oracle database

- Creating an Amazon S3 bucket
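As an illustration, an identity-based policy covering the three actions above might look like the sketch below. The statement ID and the wildcard `Resource` are assumptions made for brevity; a real policy would normally scope resources more tightly.

```python
import json

# One IAM action per example in the list above.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "AllowListedActions",  # hypothetical statement ID
            "Effect": "Allow",
            "Action": [
                "ec2:AuthorizeSecurityGroupIngress",  # configure a security group
                "rds:CreateDBInstance",               # create an RDS database
                "s3:CreateBucket",                    # create an S3 bucket
            ],
            "Resource": "*",  # assumption: narrowed in practice
        }
    ],
}

policy_json = json.dumps(policy, indent=2)
print(policy_json)
```

Changing `"Effect"` to `"Deny"` would block the same actions instead.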

- To create a group of Amazon EC2 instances in an application-tier subnet that accepts traffic only from web-tier instances over HTTP (the web-tier instances sit in a different subnet and share a web-tier security group), associate each application-tier instance with a security group that allows inbound HTTP traffic from the web-tier security group
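A minimal sketch of such a rule, written as the `IpPermissions` structure that the EC2 API accepts. The helper name and the security group ID are placeholders, not values from the original notes.

```python
def http_from_security_group(source_group_id):
    """Build an inbound rule allowing TCP port 80 from another security group."""
    return {
        "IpProtocol": "tcp",
        "FromPort": 80,
        "ToPort": 80,
        # Reference the web-tier group instead of a CIDR range, so any
        # instance attached to that group is allowed regardless of its subnet.
        "UserIdGroupPairs": [{"GroupId": source_group_id}],
    }


# Placeholder ID standing in for the web-tier security group.
app_tier_ingress = [http_from_security_group("sg-0123456789abcdef0")]
```

With boto3 installed and credentials configured, this list could be passed as `IpPermissions` to `ec2.authorize_security_group_ingress(GroupId=..., IpPermissions=app_tier_ingress)` on the application-tier group.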

- A batch job must run every Sunday night. It finishes in under 90 minutes and cannot be postponed. The appropriate EC2 purchasing option is Scheduled Instances

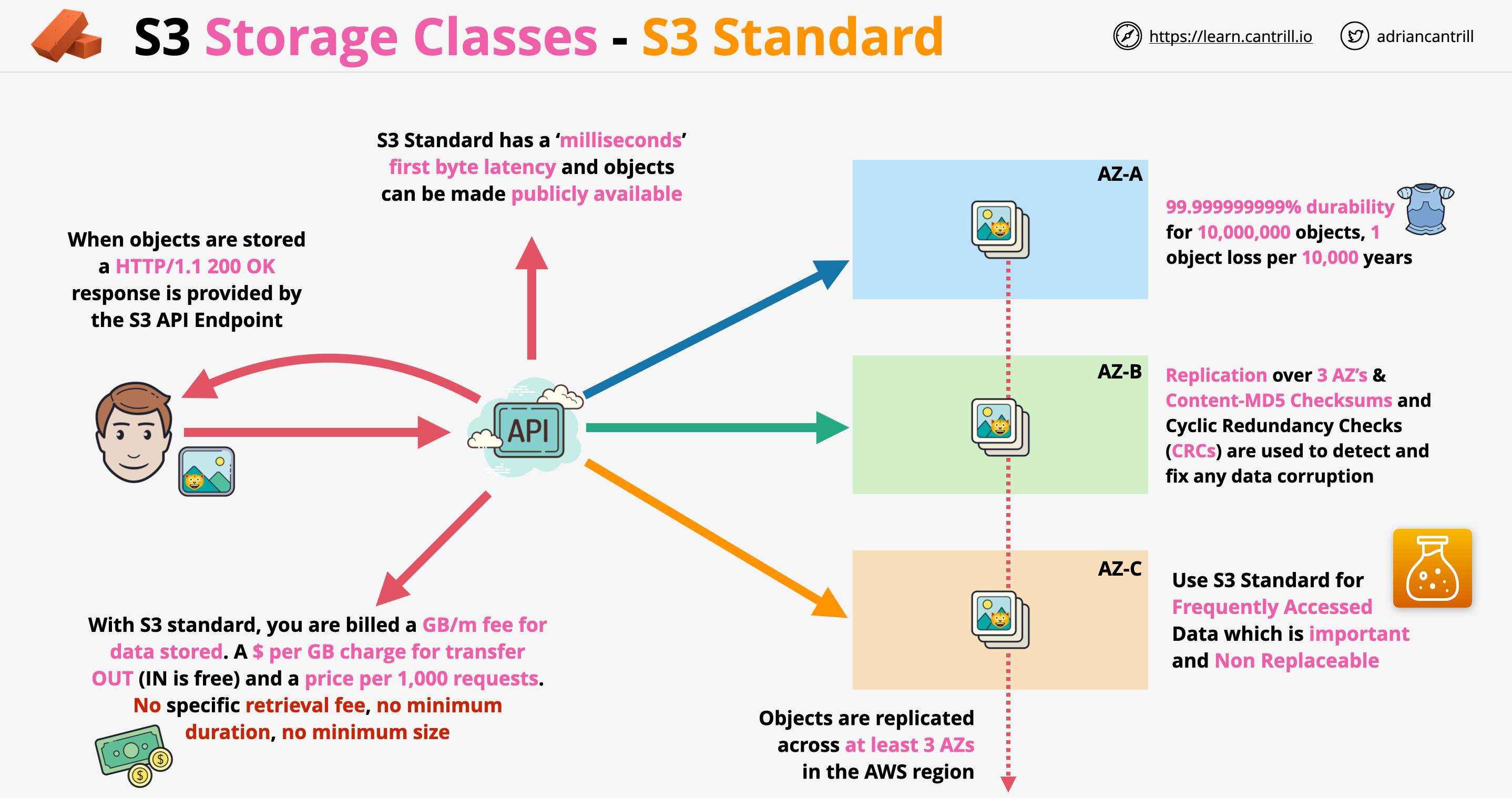

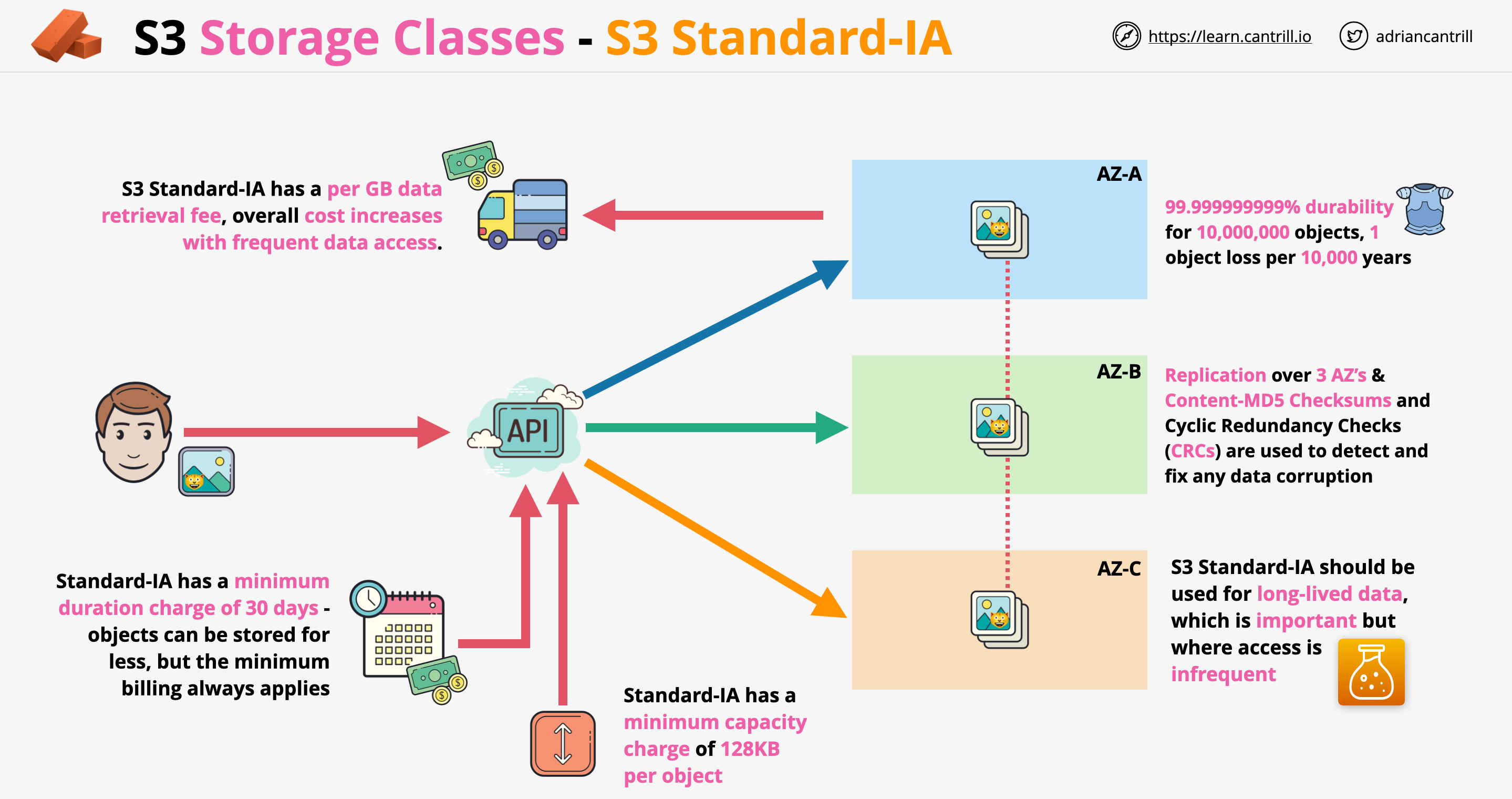

- A PDF file must be made publicly available on the web. Customers will download it through their browsers millions of times. Store the file in S3 Standard

- S3 can host static websites and make them accessible on the web

- The website URL will be:

- <bucket-name>.s3-website-<AWS-region>.amazonaws.com

- If you get a 403 (Forbidden) error, make sure the bucket policy allows public reads!
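The URL pattern and the public-read policy can be sketched as follows. The function names and the bucket name are hypothetical. Some regions use a dot rather than a dash before the region name, which the `dash_style` flag models.

```python
import json


def website_endpoint(bucket, region, dash_style=True):
    """Build the S3 static website endpoint for a bucket."""
    separator = "-" if dash_style else "."
    return f"http://{bucket}.s3-website{separator}{region}.amazonaws.com"


def public_read_policy(bucket):
    """Return a bucket policy (as JSON) that lets anyone read every object."""
    return json.dumps({
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "PublicReadGetObject",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": f"arn:aws:s3:::{bucket}/*",
        }],
    })


print(website_endpoint("my-bucket", "us-east-1"))
```

A 403 can also persist when the bucket's Block Public Access settings are still enabled, since they override a public bucket policy.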

- A company has two applications: a sender application that sends messages with payloads to be processed, and a processing application intended to receive the messages with payloads. The company wants to implement an AWS service to handle messages between the two applications. The sender application can send about 1,000 messages each hour. The messages may take up to 2 days to be processed. If messages fail to process, they must be retained so that they do not impact the processing of any remaining messages. The MOST operationally efficient solution is to integrate the sender and processor applications with an Amazon Simple Queue Service (Amazon SQS) queue and configure a dead-letter queue to collect the messages that fail to process.
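A sketch of the queue attributes such a setup might use. The function name, the ARN, the receive count of 5, and the 4-day retention are illustrative assumptions; the only hard requirement from the scenario is that retention exceeds the 2-day processing window (SQS allows up to 14 days).

```python
import json


def queue_attributes(dlq_arn, max_receive_count=5, retention_days=4):
    """Build SQS queue attributes that route repeatedly failing messages to a DLQ."""
    return {
        # SQS expects attribute values as strings; retention is in seconds.
        "MessageRetentionPeriod": str(retention_days * 24 * 60 * 60),
        "RedrivePolicy": json.dumps({
            "deadLetterTargetArn": dlq_arn,
            # After this many failed receives, the message moves to the DLQ.
            "maxReceiveCount": str(max_receive_count),
        }),
    }


attrs = queue_attributes("arn:aws:sqs:us-east-1:123456789012:orders-dlq")

# Rough backlog if nothing is processed for the full 2-day window.
worst_case_backlog = 1000 * 24 * 2
```

With boto3, `attrs` could be passed as `Attributes` to `sqs.create_queue(QueueName=..., Attributes=attrs)`.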

- A company recently signed a contract with an AWS Managed Service Provider (MSP) Partner for help with an application migration initiative. A solution architect needs to share an Amazon Machine Image (AMI) from an existing AWS account with the MSP Partner's AWS account. The AMI is backed by Amazon Elastic Block Store (Amazon EBS) and uses a customer managed Customer Master Key (CMK) to encrypt EBS volume snapshots. The MOST secure way for the solution architect to share the AMI is to modify the launchPermission property of the AMI so that it is shared with the MSP Partner's AWS account only, and to modify the CMK's key policy to allow the MSP Partner's AWS account to use the key. (An AMI with encrypted snapshots cannot be made public.)
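A minimal sketch of the two pieces involved in sharing an encrypted AMI with one specific account: a launch permission entry and a key policy statement. The function names, the statement ID, and the account ID are placeholders.

```python
def launch_permission(account_id):
    """Launch permission change that shares an AMI with a single account."""
    return {"Add": [{"UserId": account_id}]}


def key_policy_statement(account_id):
    """Key policy statement letting another account use the CMK for the shared AMI."""
    return {
        "Sid": "AllowPartnerAccountUseOfKey",  # hypothetical statement ID
        "Effect": "Allow",
        "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
        "Action": [
            "kms:Decrypt",
            "kms:DescribeKey",
            "kms:CreateGrant",
            "kms:ReEncrypt*",
            "kms:GenerateDataKey*",
        ],
        "Resource": "*",  # in a key policy, "*" refers to the key itself
    }


partner = "111122223333"  # placeholder account ID
permission = launch_permission(partner)
statement = key_policy_statement(partner)
```

With boto3, `permission` could be passed as `LaunchPermission` to `ec2.modify_image_attribute(ImageId=..., LaunchPermission=permission)`, and `statement` would be appended to the key's existing policy.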

- A company needs a storage solution for an application that runs on a High Performance Computing (HPC) cluster. The cluster is hosted on AWS Fargate for Amazon Elastic Container Service (Amazon ECS). The company needs a mountable file system that provides concurrent access to files while delivering hundreds of GBps of throughput at sub-millisecond latency. The company should create an Amazon FSx for Lustre file share for the application data and create an IAM role that allows Fargate to access the FSx for Lustre file share.

- A company has an ecommerce application that stores data in an on-premises SQL database. The company has decided to migrate this database to AWS. However, as part of the migration, the company wants to find a way to attain sub-millisecond responses to common read requests. A solution architect knows that the increase in speed is paramount and that a small percentage of stale data returned in the database reads is acceptable. The solution architect should recommend building a database cache using Amazon ElastiCache.
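The caching idea can be illustrated with a cache-aside sketch. An in-memory dictionary stands in for ElastiCache here, and the class name, TTL, and loader function are all hypothetical. The TTL is what bounds how stale a returned value can be.

```python
import time


class CacheAside:
    """Read-through helper: serve from cache, fall back to the database on a miss."""

    def __init__(self, load_from_db, ttl_seconds=60, clock=time.monotonic):
        self._load = load_from_db   # function that queries the database
        self._ttl = ttl_seconds     # how long an entry may be served
        self._clock = clock         # injectable for testing
        self._store = {}            # key -> (value, expiry time)

    def get(self, key):
        entry = self._store.get(key)
        now = self._clock()
        if entry is not None and entry[1] > now:
            return entry[0]         # cache hit, possibly slightly stale
        value = self._load(key)     # cache miss or expired: hit the database
        self._store[key] = (value, now + self._ttl)
        return value
```

Repeated reads of the same key within the TTL never reach the database, which is where the latency gain comes from.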

- A company has an application that calls AWS Lambda functions. A recent code review found database credentials stored in the source code. The database credentials need to be removed from the Lambda source code. The credentials must then be securely stored and rotated on an ongoing basis to meet security policy requirements. A solution architect should recommend storing the password in AWS Secrets Manager and associating the Lambda function with a role that can retrieve the password from Secrets Manager given its secret ID.
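A sketch of how the function might fetch the credentials at runtime. It takes the Secrets Manager client as a parameter so it can run without AWS access. The function name is hypothetical, and the assumption that the secret is stored as a JSON string with `username` and `password` keys is illustrative.

```python
import json


def load_db_credentials(secrets_client, secret_id):
    """Fetch a secret by ID and parse it as JSON (assumes a JSON SecretString)."""
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


# In a Lambda function the client would typically be created once, outside
# the handler, e.g.:
#   import boto3
#   client = boto3.client("secretsmanager")
#   creds = load_db_credentials(client, "my-db-secret")  # hypothetical secret ID
```

Because the secret is fetched at runtime, rotating it in Secrets Manager requires no code change or redeployment.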

- A social media company is building a feature for its website. The feature will give users the ability to upload photos. The company expects significant increases in demand during large events and must ensure that the website can handle the upload traffic from users. The MOST scalable solution is to generate Amazon S3 presigned URLs in the application and upload files directly from the user's browser into an S3 bucket.
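A sketch of the server-side half of that flow. The function takes an S3 client as a parameter; the function name, bucket, key, and 5-minute expiry are assumptions for illustration.

```python
def presigned_upload_url(s3_client, bucket, key, expires_in=300):
    """Return a time-limited URL that the browser can PUT a file to directly."""
    return s3_client.generate_presigned_url(
        "put_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,  # seconds until the URL stops working
    )


# With boto3 installed and credentials configured, usage might look like:
#   import boto3
#   url = presigned_upload_url(boto3.client("s3"), "photo-uploads", "user42/cat.jpg")
```

The application only signs URLs, so the upload bytes never pass through the web servers and S3 absorbs the traffic spikes.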

- A company hosts historical weather records in Amazon S3. The records are downloaded from the company's website by way of a URL that resolves to a domain name. Users all over the world access this content through subscriptions. A third-party provider hosts the company's root domain name, but the company recently migrated some of its services to Amazon Route 53. The company wants to consolidate contracts, reduce latency for users, and reduce costs related to serving the application to subscribers. The company should create an A record in a Route 53 hosted zone for the application, create a Route 53 traffic policy for the web application, and configure a geolocation rule. Configure health checks to check the health of the endpoint and route DNS queries to other endpoints if an endpoint is unhealthy.

- www.careers360.com/courses-certifications/articles/8-must-have-skills-for-aws-cloud-architects

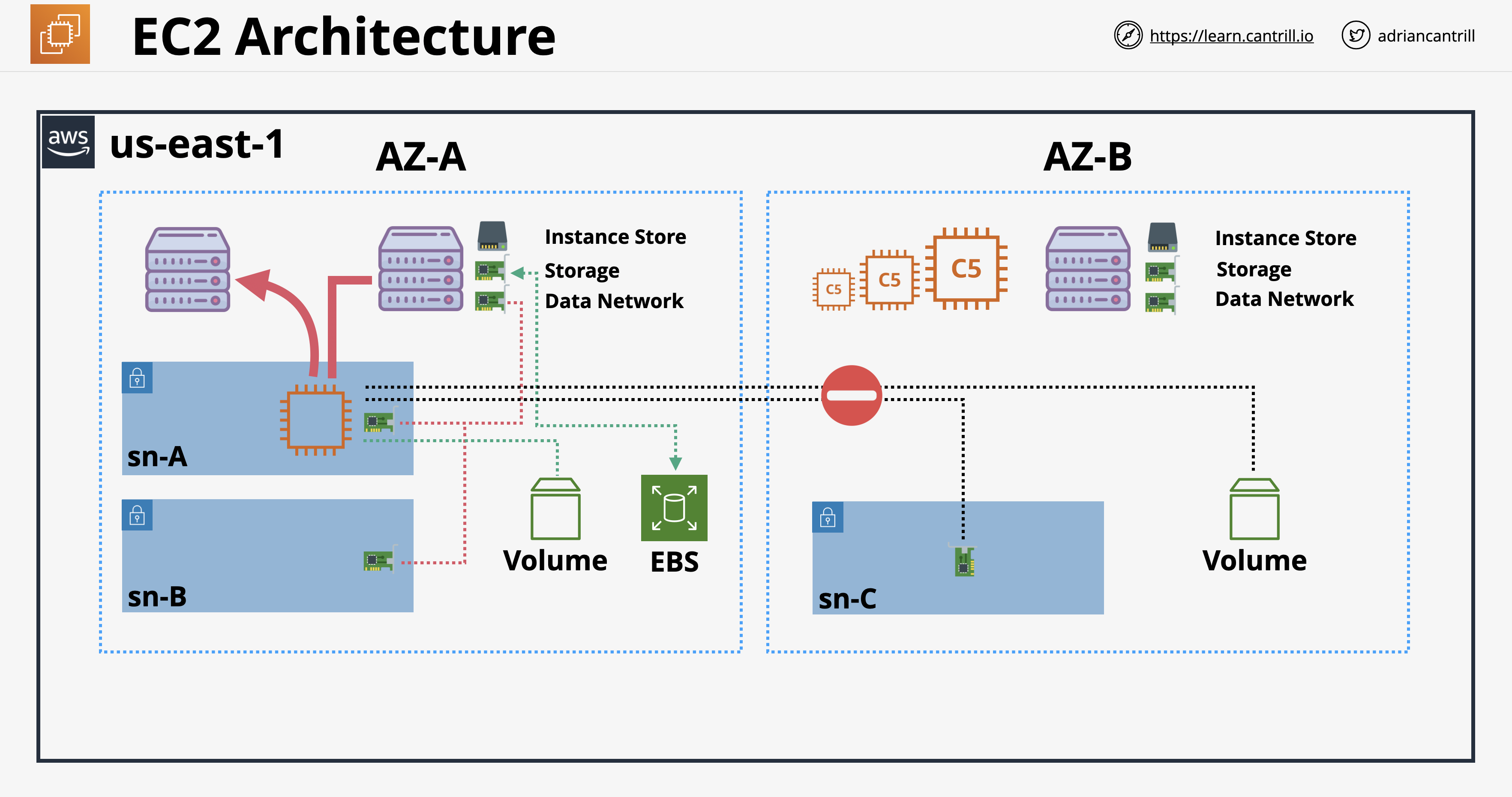

- https://learn.cantrill.io teaches both theory and hands-on practice, not just what is needed to pass the exam

- For exam-focused preparation, take Stéphane Maarek's courses on Udemy: https://courses.datacumulus.com

- A Cloud Guru is not recommended

- For free learning on YouTube: https://www.youtube.com/c/Freecodecamp/search?query=aws

- Microsoft licensing (Volume, CAL, SPLA) explained simply enough to understand in one read:

blog.cloudhm.co.th/license-microsoft-volume-cal-spla

- Understand Windows Server licensing in 5 minutes:

blog.cloudhm.co.th/windows-server-license-in-5-minutes

- SQL Server licensing: choose well and save hundreds of thousands!!:

blog.cloudhm.co.th/sql-server-license

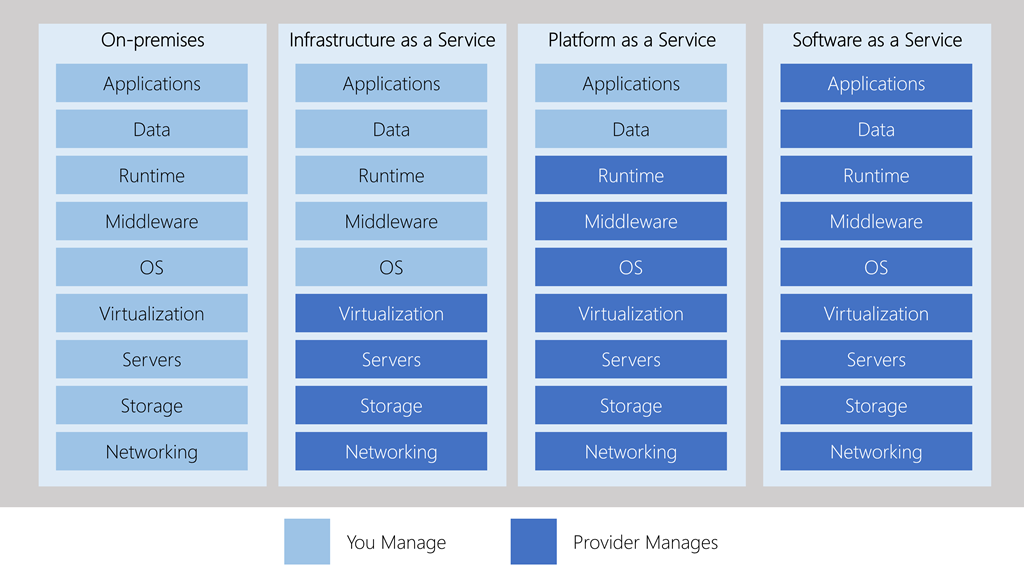

- How do a cloud server and a Virtual Private Server (VPS) differ?:

blog.cloudhm.co.th/cloud-server-vs-vps

- What is DNS, and why does it matter for your systems?:

blog.cloudhm.co.th/what-is-dns

- How does backup differ from Disaster Recovery and High Availability?:

blog.cloudhm.co.th/backup-vs-disaster-recovery-vs-high-availability

- Drones in the 4.0 era and capabilities that may surprise you:

blog.cloudhm.co.th/drone-4-0

- What is cloudDR?:

blog.cloudhm.co.th/cloud-dr

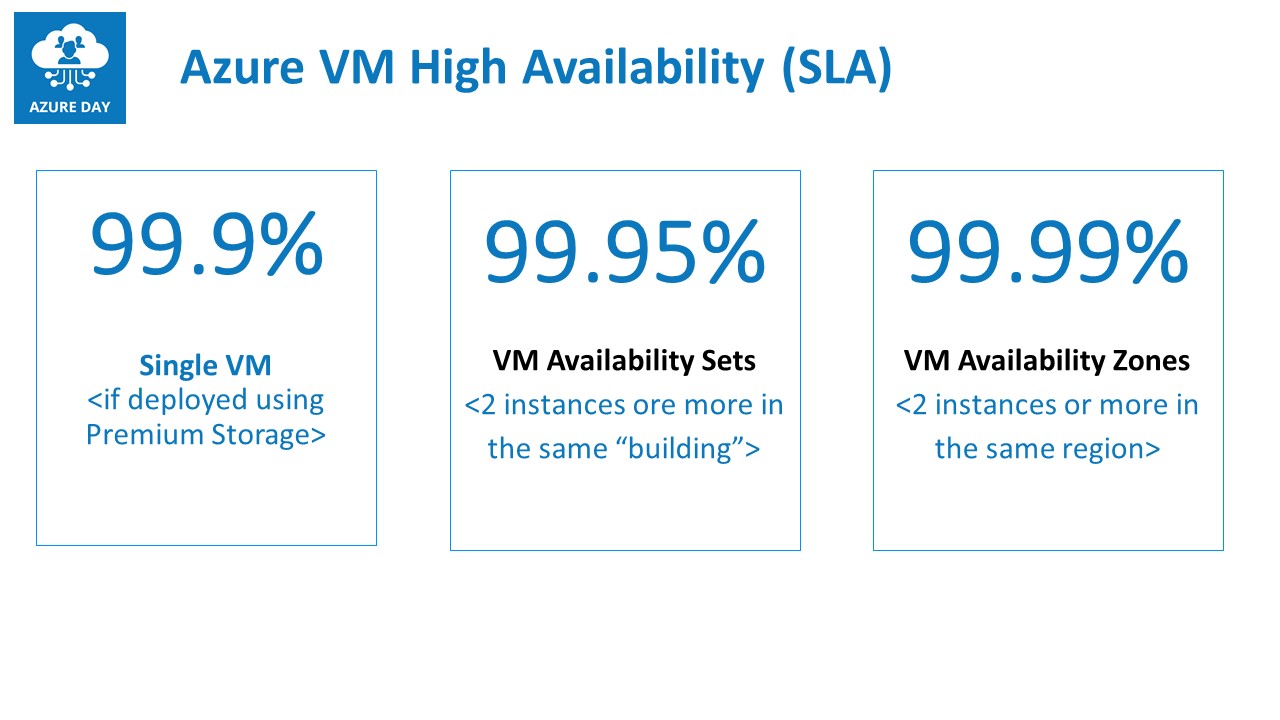

- What is an SLA?:

blog.cloudhm.co.th/what-is-sla

- What is High Availability (HA)?:

blog.cloudhm.co.th/high-availability

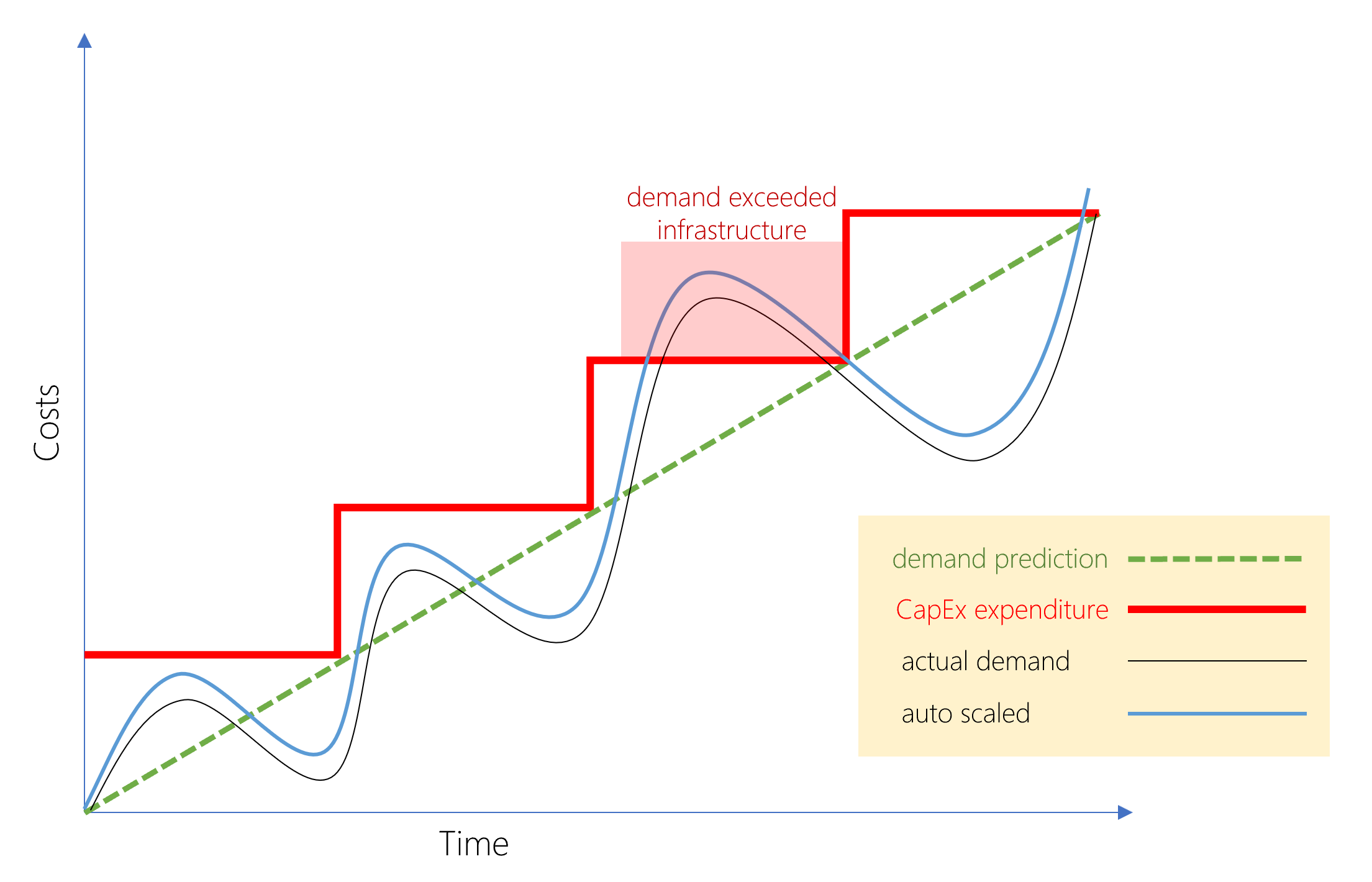

- AWS vs domestic cloud: hidden costs you might not expect:

blog.cloudhm.co.th/aws-vs-domestic-cloud

- What is a data center? Tier standards you may not know yet!!:

blog.cloudhm.co.th/data-center-tier

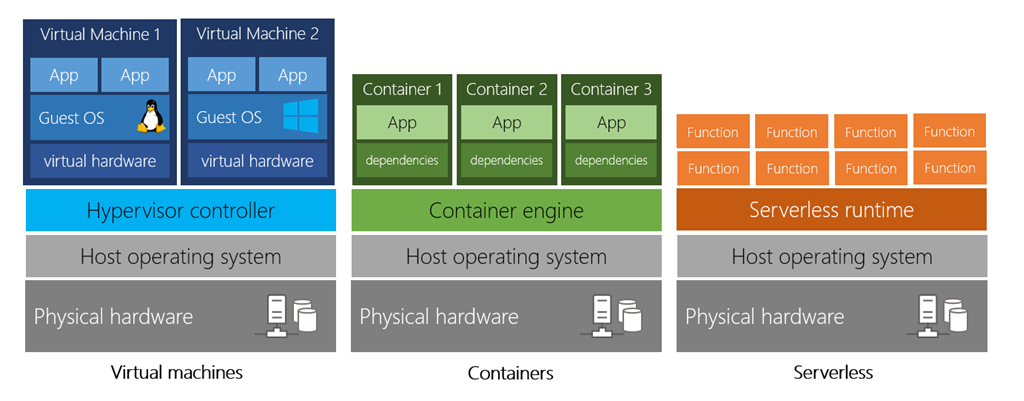

- The evolution of IT infrastructure and Hyper-Converged Infrastructure (HCI):

blog.cloudhm.co.th/it-infrastructure-and-hci