PlAwAnSaI

Administrator


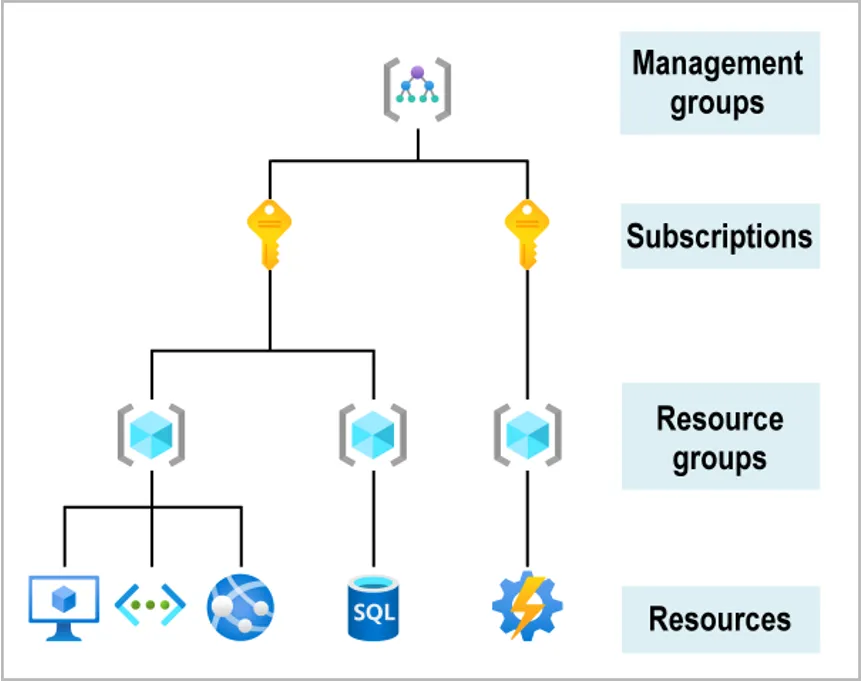

- A company uses AWS Organizations with a single OU named Production to manage multiple accounts. All accounts are members of the Production OU. Admins use deny list SCPs in the root of the organization to manage access to restricted services.

The company recently acquired a new business unit and invited the new unit's existing AWS account to the organization. Once onboarded, the admins of the new business unit discovered that they are not able to update existing AWS Config rules to meet the company's policies.

To allow the admins to make changes while continuing to enforce the current policies, without introducing additional long-term maintenance, the company should create a temporary OU named Onboarding for the new account, apply an SCP to the Onboarding OU that allows AWS Config actions, move the organization's root SCP to the Production OU, and move the new account to the Production OU once the AWS Config adjustments are complete.

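An SCP at a lower level can't add back a permission that an SCP at a higher level blocks: SCPs can only filter permissions, they never grant them. That is why the deny list SCP has to move from the root to the Production OU instead of being overridden by an allow SCP on the Onboarding OU.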
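The filtering rule can be sketched as a small Python model. It is a simplification, assuming each SCP is reduced to lists of allowed and denied action patterns (no conditions or resource scoping); the deny patterns `config:Put*` and `config:Delete*` are hypothetical stand-ins for the company's real deny list.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class SCP:
    # Simplified SCP: action patterns it allows and action patterns it denies.
    allow: list = field(default_factory=lambda: ["*"])  # default models FullAWSAccess
    deny: list = field(default_factory=list)


def _matches(action, patterns):
    return any(fnmatchcase(action, p) for p in patterns)


def level_permits(action, scps):
    # At one level (root, OU, or account), some attached SCP must allow
    # the action and no attached SCP may deny it.
    allowed = any(_matches(action, s.allow) for s in scps)
    denied = any(_matches(action, s.deny) for s in scps)
    return allowed and not denied


def effective_permits(action, path):
    # path lists the SCPs attached at each level from the root down to the account.
    # SCPs only filter, so the action must survive every level.
    return all(level_permits(action, scps) for scps in path)


FULL_ACCESS = SCP()
# Hypothetical deny list SCP that blocks changes to AWS Config rules.
RESTRICT = SCP(allow=[], deny=["config:Put*", "config:Delete*"])
ALLOW_CONFIG = SCP(allow=["config:*"])
ACTION = "config:PutConfigRule"

# Before: deny list at the root, new account under Production.
before = [[FULL_ACCESS, RESTRICT], [FULL_ACCESS], [FULL_ACCESS]]
# An allow SCP on a lower OU does not help while the root still denies.
allow_below_root = [[FULL_ACCESS, RESTRICT], [FULL_ACCESS, ALLOW_CONFIG], [FULL_ACCESS]]
# After: root SCP moved to Production; new account sits in Onboarding.
onboarding = [[FULL_ACCESS], [FULL_ACCESS, ALLOW_CONFIG], [FULL_ACCESS]]
production = [[FULL_ACCESS], [FULL_ACCESS, RESTRICT], [FULL_ACCESS]]

for name, path in [("before", before), ("allow_below_root", allow_below_root),
                   ("onboarding", onboarding), ("production", production)]:
    print(name, effective_permits(ACTION, path))
```

In this model only the Onboarding path permits the Config change, while accounts under Production stay restricted.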

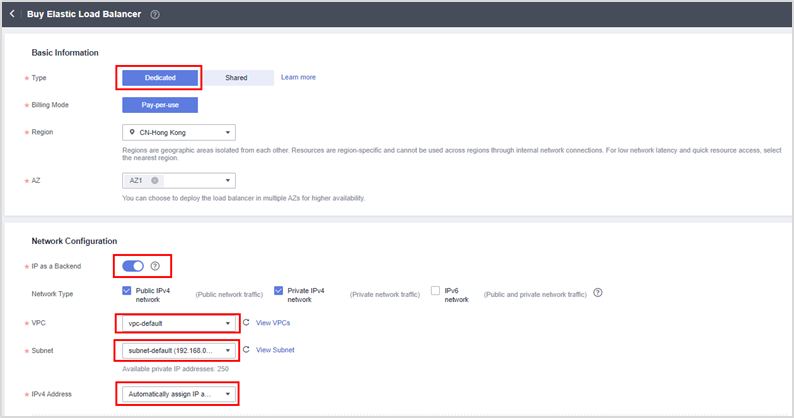

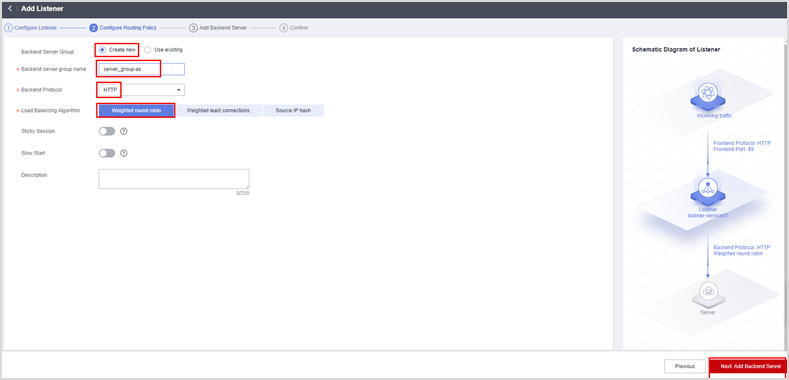

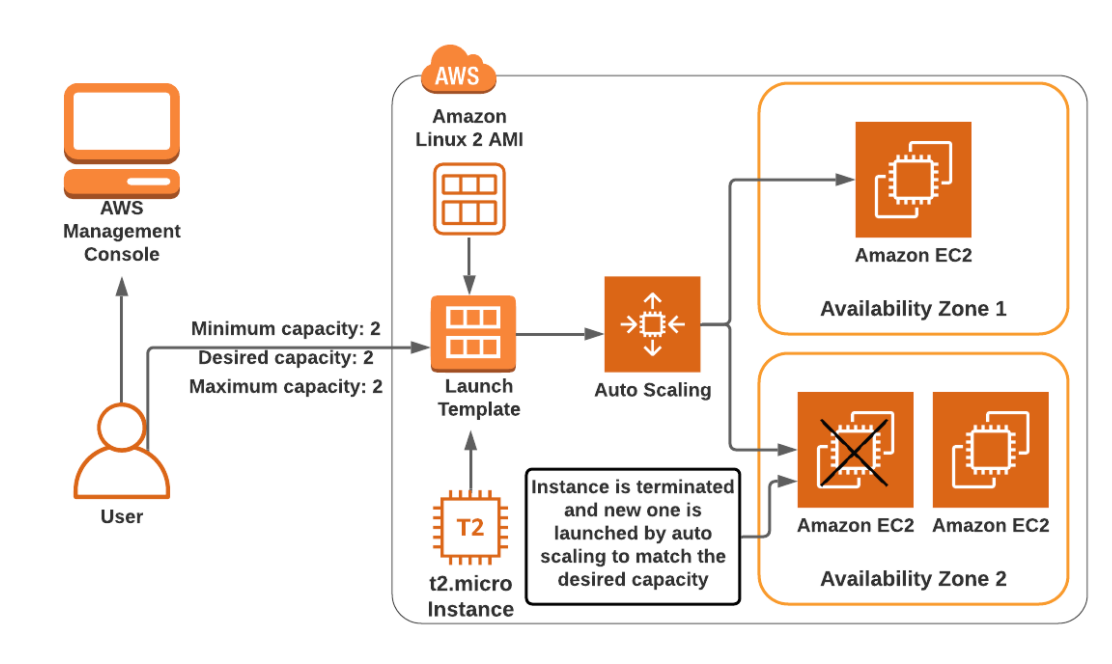

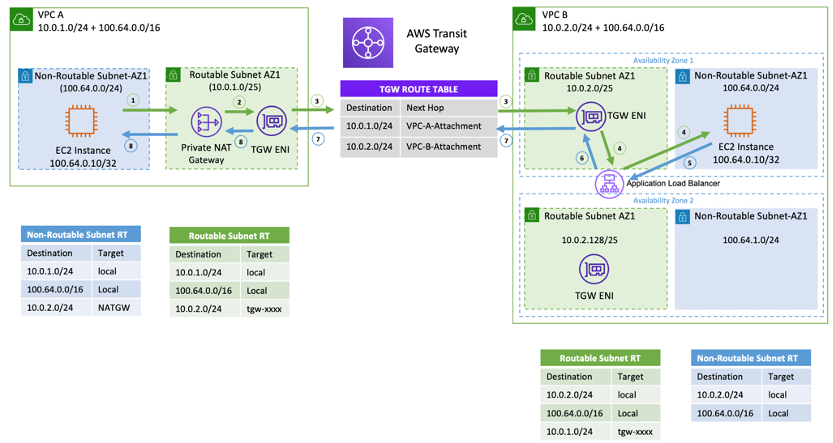

- A company is running a two-tier web-based app in an on-premises data center. The app layer consists of a single server running a stateful app. The app connects to a PostgreSQL DB running on a separate server. The app's user base is expected to grow significantly, so the company is migrating the app and DB to AWS. The solution will use Amazon Aurora PostgreSQL, Amazon EC2 Auto Scaling, and Elastic Load Balancing (ELB).

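The solution that provides a consistent user experience while allowing the app and DB tiers to scale is to enable Aurora Auto Scaling for Aurora Replicas and use an Application Load Balancer (ALB) with the round robin routing algorithm and sticky sessions enabled. Sticky sessions keep each user on the instance that holds their session state, which the stateful app needs.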
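Round robin with sticky sessions can be sketched as a toy Python model (not how an ALB is actually implemented): new sessions are spread across targets in turn, and returning sessions are pinned to the target that already holds their state. The instance IDs and session names are hypothetical.

```python
from itertools import cycle


class StickyRoundRobin:
    """Toy model of round robin routing with sticky sessions."""

    def __init__(self, targets):
        self._next_target = cycle(targets)
        self._sessions = {}  # session cookie value -> target that owns the session

    def route(self, session_id):
        # New sessions are assigned round robin; returning sessions stick to
        # the target that already holds their in-memory state.
        if session_id not in self._sessions:
            self._sessions[session_id] = next(self._next_target)
        return self._sessions[session_id]


lb = StickyRoundRobin(["i-app-1", "i-app-2"])  # hypothetical instance IDs
routes = [lb.route(s) for s in ["alice", "bob", "alice", "carol", "bob"]]
print(routes)
```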

- A company uses a service to collect metadata from apps that the company hosts on premises. Consumer devices such as TVs and internet radios access the apps. Many older devices do not support certain HTTP headers and exhibit errors when these headers are present in responses. The company has configured an on-premises load balancer to remove the unsupported headers from responses sent to older devices, which the company identified by the User-Agent headers.

The company wants to migrate the service to AWS, adopt serverless technologies, and retain the ability to support the older devices. The company has already migrated the apps into a set of AWS Lambda functions.

The solution that meets these requirements is to create an Amazon CloudFront distribution for the metadata service and an ALB. Configure the CloudFront distribution to forward requests to the ALB, and configure the ALB to invoke the correct Lambda function for each type of request. Then create a CloudFront function that removes the problematic headers based on the value of the User-Agent header.

CloudFront Functions are lighter-weight and faster than Lambda@Edge, which makes them a good fit for simple header manipulation like this.
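The header-stripping logic can be sketched as follows. CloudFront Functions themselves are written in JavaScript, so this Python version only mirrors the shape of a viewer-response event (the request and response both present, lowercase header names mapped to `{"value": ...}`); the User-Agent markers and the header name `x-example-unsupported` are hypothetical placeholders.

```python
# Hypothetical values: the real markers and header names depend on the devices.
LEGACY_USER_AGENT_MARKERS = ("LegacyTV/", "OldRadio/")
UNSUPPORTED_HEADERS = ("x-example-unsupported",)


def handler(event):
    # Viewer-response style event: carries the viewer's request and the
    # response that is about to be returned to it.
    request = event["request"]
    response = event["response"]
    user_agent = request["headers"].get("user-agent", {}).get("value", "")
    if any(marker in user_agent for marker in LEGACY_USER_AGENT_MARKERS):
        for name in UNSUPPORTED_HEADERS:
            response["headers"].pop(name, None)  # header names are lowercase
    return response


def make_event(user_agent):
    return {
        "request": {"headers": {"user-agent": {"value": user_agent}}},
        "response": {"headers": {"x-example-unsupported": {"value": "1"},
                                 "content-type": {"value": "application/json"}}},
    }


old = handler(make_event("LegacyTV/2.1"))
new = handler(make_event("Mozilla/5.0"))
print(sorted(old["headers"]), sorted(new["headers"]))
```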

- A retail company needs to provide a series of data files to another company, which is its business partner. These files are saved in an Amazon S3 bucket under Account A, which belongs to the retail company. The business partner company wants one of its IAM users, User_DataProcessor, to access the files from its own AWS account (Account B).

The steps the companies must take so that User_DataProcessor can access the S3 bucket are:

- In Account A, set the S3 bucket policy to allow access to the bucket from the IAM user in Account B. This is done by adding a statement to the bucket policy that allows the IAM user in Account B to perform the necessary actions (GetObject and ListBucket) on the bucket and its contents. s3:ListBucket applies to the bucket ARN, while s3:GetObject applies to the object ARNs (bucket/*), so both resources must be listed.

```json
{
  "Effect": "Allow",
  "Principal": { "AWS": "arn:aws:iam::AccountB:user/User_DataProcessor" },
  "Action": ["s3:GetObject", "s3:ListBucket"],
  "Resource": [
    "arn:aws:s3:::AccountABucketName",
    "arn:aws:s3:::AccountABucketName/*"
  ]
}
```

- In Account B, create an IAM policy that allows the IAM user (User_DataProcessor) to perform the necessary actions (GetObject and ListBucket) on the S3 bucket and its contents, and attach it to the user. The policy should reference the ARNs of the S3 bucket and its objects and the actions that the user is allowed to perform.

```json
{
  "Effect": "Allow",
  "Action": ["s3:GetObject", "s3:ListBucket"],
  "Resource": [
    "arn:aws:s3:::AccountABucketName",
    "arn:aws:s3:::AccountABucketName/*"
  ]
}
```

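Why both accounts must grant access can be sketched with a simplified Python evaluator. It assumes a single Allow statement on each side and ignores Deny statements, conditions, ACLs, and Object Ownership; the bucket and account names are the same placeholders used in the policies. It also shows why s3:ListBucket needs the bucket ARN itself: a statement listing only `bucket/*` does not match a request on the bucket.

```python
from fnmatch import fnmatchcase

USER = "arn:aws:iam::AccountB:user/User_DataProcessor"
BUCKET = "arn:aws:s3:::AccountABucketName"


def allows(statement, action, resource, principal=None):
    # Simplified: Allow statements only, Action/Resource wildcards, and an
    # optional exact Principal check (no Deny, Conditions, or ACLs).
    if statement["Effect"] != "Allow":
        return False
    if principal is not None and statement.get("Principal", {}).get("AWS") != principal:
        return False
    resources = statement["Resource"]
    if isinstance(resources, str):
        resources = [resources]
    return (any(fnmatchcase(action, a) for a in statement["Action"])
            and any(fnmatchcase(resource, r) for r in resources))


def cross_account_allowed(identity_stmt, bucket_stmt, action, resource):
    # Cross-account access needs BOTH the identity policy in Account B
    # and the bucket policy in Account A to allow the request.
    return (allows(identity_stmt, action, resource)
            and allows(bucket_stmt, action, resource, principal=USER))


ACTIONS = ["s3:GetObject", "s3:ListBucket"]
identity_stmt = {"Effect": "Allow", "Action": ACTIONS,
                 "Resource": [BUCKET, BUCKET + "/*"]}
bucket_stmt = {"Effect": "Allow", "Principal": {"AWS": USER}, "Action": ACTIONS,
               "Resource": [BUCKET, BUCKET + "/*"]}
objects_only = dict(bucket_stmt, Resource=[BUCKET + "/*"])

list_ok = cross_account_allowed(identity_stmt, bucket_stmt, "s3:ListBucket", BUCKET)
list_objects_only = cross_account_allowed(identity_stmt, objects_only, "s3:ListBucket", BUCKET)
get_ok = cross_account_allowed(identity_stmt, bucket_stmt, "s3:GetObject",
                               BUCKET + "/data/file.csv")
print(list_ok, list_objects_only, get_ok)
```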

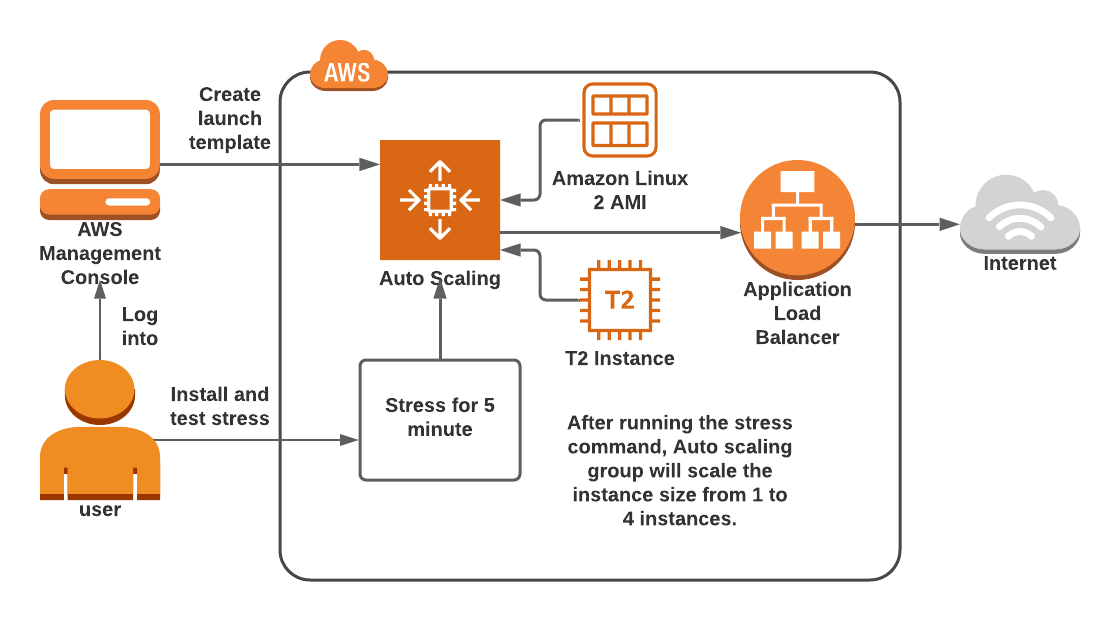

- A company is running a traditional web app on Amazon EC2 instances. The company needs to refactor the app as microservices that run on containers. Separate versions of the app exist in two distinct environments: production and testing. Load for the app is variable, but the minimum load and the maximum load are known. A solutions architect needs to design the updated app with a serverless architecture that minimizes operational complexity.

The MOST cost-effective solution that meets these requirements is to upload the container images to Amazon ECR, configure two auto-scaled Amazon ECS clusters with the Fargate launch type to handle the expected load, deploy tasks from the ECR images, and configure two separate ALBs to direct traffic to the ECS clusters.

Amazon EKS, by contrast, charges a fixed fee for each cluster's control plane (approximately $70 per month) plus additional fees to run supporting services.

Reference: https://www.densify.com/eks-best-practices/aws-ecs-vs-eks
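A quick cost check for the two environments, assuming EKS standard support pricing of $0.10 per cluster-hour and an average 730-hour month (check current pricing before relying on these numbers):

```python
# Assumed pricing: EKS standard support, USD per cluster-hour.
EKS_CONTROL_PLANE_PER_HOUR = 0.10
HOURS_PER_MONTH = 730  # average hours in a month


def eks_control_plane_monthly(clusters):
    # Control-plane fee only; worker nodes or Fargate capacity cost extra.
    return round(EKS_CONTROL_PLANE_PER_HOUR * HOURS_PER_MONTH * clusters, 2)


print(eks_control_plane_monthly(1))  # 73.0
print(eks_control_plane_monthly(2))  # 146.0 for production + testing
```

ECS has no separate control-plane charge; with Fargate you pay for the vCPU and memory your tasks request.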

- A company has a multi-tier web app that runs on a fleet of Amazon EC2 instances behind an ALB. The instances are in an Auto Scaling group. The ALB and the Auto Scaling group are replicated in a backup AWS Region. The minimum and maximum values for the Auto Scaling group are set to zero. An Amazon RDS Multi-AZ DB instance stores the app's data. The DB instance has a read replica in the backup Region. The app presents an endpoint to end users by using an Amazon Route 53 record.

The company needs to reduce its RTO to less than 15 mins by giving the app the ability to automatically fail over to the backup Region. The company does not have a large enough budget for an active-active strategy.

To meet these requirements, a solutions architect should recommend creating an AWS Lambda function in the backup Region that promotes the read replica and modifies the Auto Scaling group values. Configure Route 53 with a health check that monitors the web app and sends an Amazon Simple Notification Service (Amazon SNS) notification to the Lambda function when the health check status is unhealthy (in practice, through an Amazon CloudWatch alarm on the health check). Update the app's Route 53 record with a failover routing policy that routes traffic to the ALB in the backup Region when a health check failure occurs.
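A minimal sketch of the failover Lambda function in Python, assuming the boto3 SDK that ships with the Lambda Python runtime. The replica ID, Auto Scaling group name, and capacities are hypothetical and read from environment variables; the AWS clients are passed into `fail_over` so the logic can be exercised without touching AWS.

```python
import os

# Hypothetical resource names and capacities; a real function would set its own values.
REPLICA_ID = os.environ.get("REPLICA_ID", "app-db-replica")
ASG_NAME = os.environ.get("ASG_NAME", "app-asg-backup")
MIN_SIZE = int(os.environ.get("MIN_SIZE", "2"))
MAX_SIZE = int(os.environ.get("MAX_SIZE", "6"))


def fail_over(rds, autoscaling):
    # 1. Promote the cross-Region read replica to a standalone, writable DB instance.
    rds.promote_read_replica(DBInstanceIdentifier=REPLICA_ID)
    # 2. Raise the backup Auto Scaling group from 0/0 so instances launch behind the ALB.
    autoscaling.update_auto_scaling_group(
        AutoScalingGroupName=ASG_NAME,
        MinSize=MIN_SIZE,
        MaxSize=MAX_SIZE,
        DesiredCapacity=MIN_SIZE,
    )


def lambda_handler(event, context):
    # Invoked by the SNS notification; the message body is not needed here.
    import boto3  # bundled with the AWS Lambda Python runtime

    fail_over(boto3.client("rds"), boto3.client("autoscaling"))
    return {"status": "failover started"}
```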

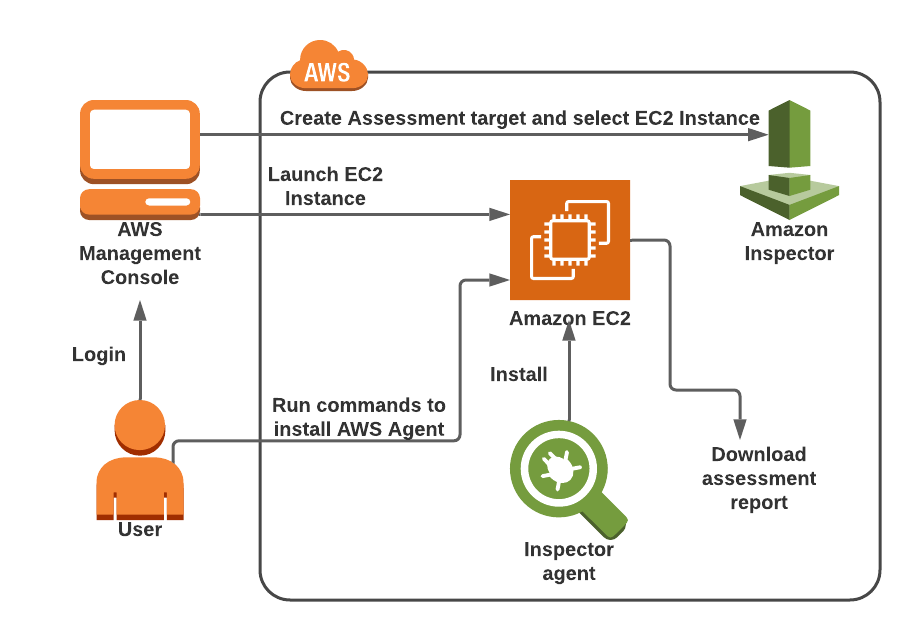

- A company is hosting a critical app on a single Amazon EC2 instance. The app uses an Amazon ElastiCache for Redis single-node cluster for an in-memory data store. The app uses an Amazon RDS for MariaDB DB instance for a relational DB. For the app to function, each piece of the infrastructure must be healthy and must be in an active state.

A solutions architect needs to improve the app's architecture so that the infrastructure can automatically recover from failure with the least possible downtime.

The steps that will meet these requirements are:

- Use an ELB to distribute traffic across multiple EC2 instances so that the app remains available if one of the instances becomes unavailable. Ensure that the EC2 instances are part of an Auto Scaling group that has a minimum capacity of two instances.

- Modify the DB instance to create a Multi-AZ deployment that extends across two AZs.

- Create a replication group for the ElastiCache for Redis cluster. Enable Multi-AZ on the cluster.
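The database and cache steps can be sketched with boto3 as well. This assumes the Redis replication group has already been created with at least one replica (Multi-AZ requires automatic failover, which needs a replica); the identifiers in the usage comment are hypothetical, and the ELB/Auto Scaling step is not covered.

```python
def make_resilient(rds, elasticache, db_instance_id, replication_group_id):
    # Convert the single-AZ RDS for MariaDB instance into a Multi-AZ deployment.
    rds.modify_db_instance(
        DBInstanceIdentifier=db_instance_id,
        MultiAZ=True,
        ApplyImmediately=True,
    )
    # Turn on automatic failover and Multi-AZ for the existing Redis replication group.
    elasticache.modify_replication_group(
        ReplicationGroupId=replication_group_id,
        AutomaticFailoverEnabled=True,
        MultiAZEnabled=True,
        ApplyImmediately=True,
    )


# Usage with real clients (hypothetical identifiers):
# import boto3
# make_resilient(boto3.client("rds"), boto3.client("elasticache"),
#                "app-mariadb", "app-redis")
```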
